If you’ve already signed up for Amazon Web Services (AWS), you can start using Amazon EC2 immediately. You can open the Amazon EC2 console, click Launch Instance, and follow the steps in the launch wizard to launch your first instance. Read more »

Posted by gol & filed under Amazon AWS.

Amazon Elastic Compute Cloud (Amazon EC2) provides scalable computing capacity in the Amazon Web Services (AWS) cloud. Using Amazon EC2 eliminates your need to invest in hardware up front, so you can develop and deploy applications faster. Read more »

Posted by gol & filed under Cisco CCNA.

Posted by gol & filed under Cisco CCNA.

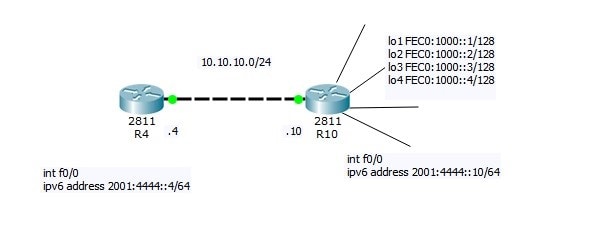

Now I will do small Lab:

R4=10.10.10.4

R10=10.10.10.10 connected via LAN link

Read more »

Posted by gol & filed under Cisco CCNA.

Follow @ASM_Educational

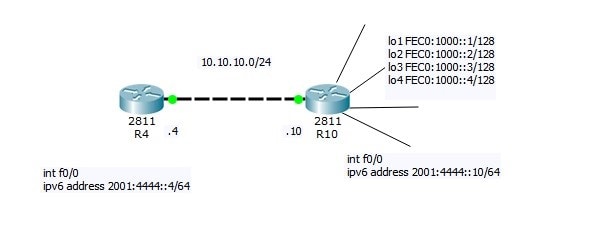

Source Logical Operations

Get our complete tutorial in PDF

Related Links:

Configuring IPv4

Configuring IPv6

Want more information on how to become Cisco CCNA Certified? Learn more!

Join our Cisco CCNA facebook study group!

Posted by gol & filed under Cisco CCNP.

Follow @ASM_Educational

Source Logical Operations

Get our complete tutorial in PDF

Configuring IPv6

- Overview of IPv6

- Implement IPv6 Addressing

- Implement IPv6 and IPv4

- Transition from IPv4 to IPv6

Posted by gol & filed under Cisco CCNA.

Follow @ASM_Educational

Source Logical Operations

Get our complete tutorial in PDF

Configuring IPv6

- Overview of IPv6

- Implement IPv6 Addressing

- Implement IPv6 and IPv4

- Transition from IPv4 to IPv6

Posted by gol & filed under Cisco CCNA.

Follow @ASM_Educational

Source Logical Operations

Get our complete tutorial in PDF

Configuring IPv4

- Overview of the TCP/IP Protocol Suite

- Describe IPv4 Addressing

- Implement Subnetting and Supernetting

- Configure and Troubleshoot IPv4

Posted by gol & filed under Cisco CCNA.

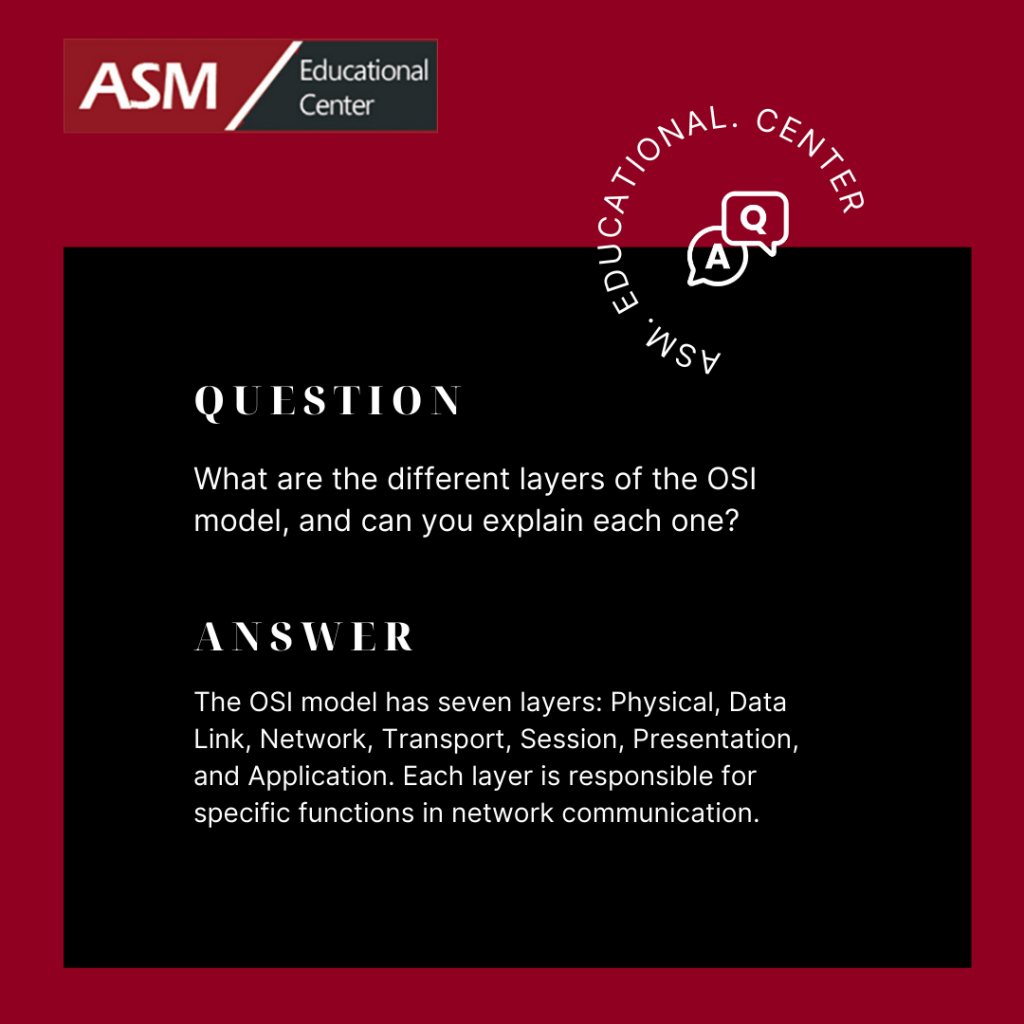

Description of OSI layers

The recommendation X.200 describes seven layers, labeled 1 to 7. Layer 1 is the lowest layer in this model.

Posted by gol & filed under Cisco CCNA.

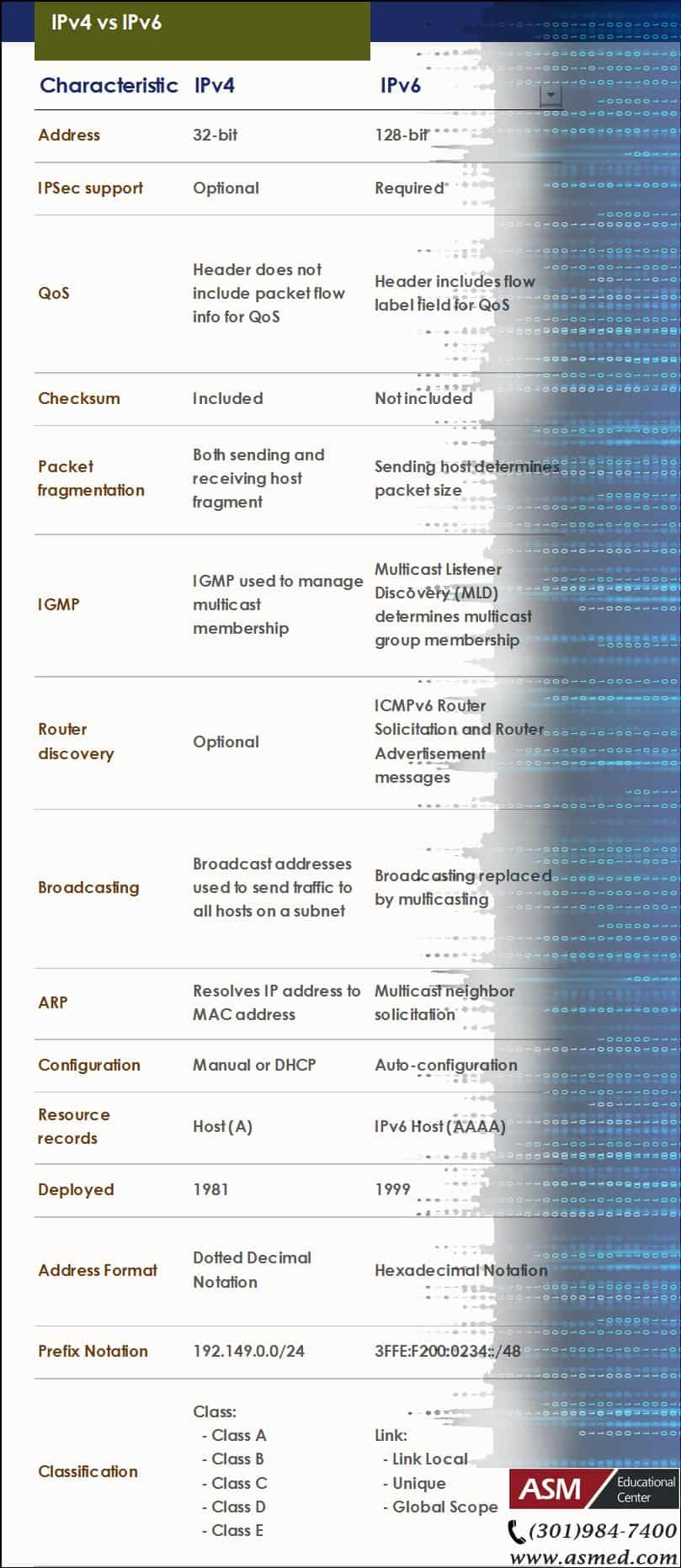

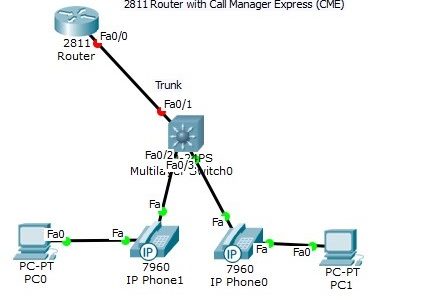

The Trunk port between the router and switch had to be manually configured using sub-interfaces. Note, however, that the DATA vlan traffic and the phone VOICE vlan traffic for each host is carried over the same link (multiple vlan traffic over the same port). Read more »

Posted by gol & filed under Cisco CCNA.

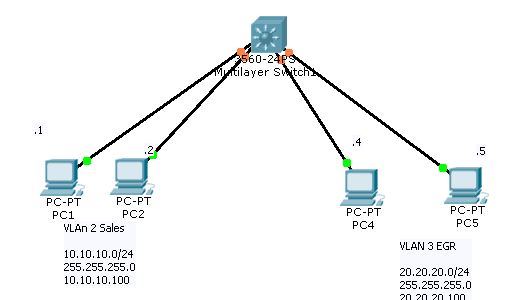

Build the following topology in packet tracer. After testing our configuration, we will deploy on devices.

Read more »

Posted by gol & filed under Cisco CCNA.

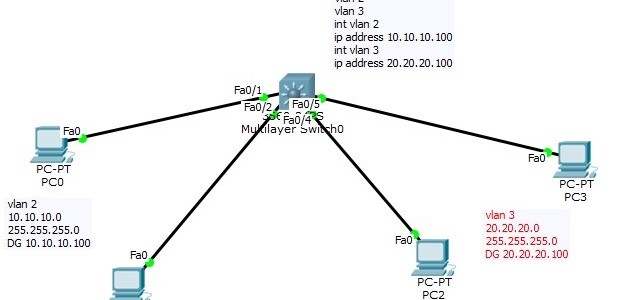

Layer 3 Switch

Now that we have seen how a “router on a stick” works, we can introduce the Layer 3 switch. In the “router on a stick” topology, what if we could bring the router inside the switch? Read more »

Posted by gol & filed under Cisco CCNA.

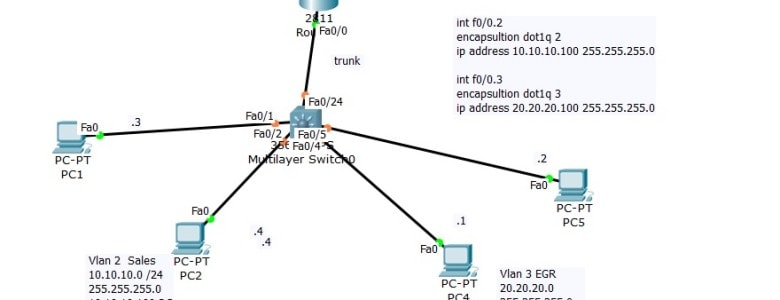

Using DHCP Server with Inter-Vlan Routing (Router on stick)

On Last Video we talked about the router on stick that is we made sure that client from Network 10.10.10.0 can communicate with Network 20.20.20.0

Posted by gol & filed under Cisco CCNA.

Setting up VLAN’s

On a new switch, all the ports are in VLAN 1 by default. We just plug in the Ethernet cables and the devices can communicate. Furthermore, all the ports are in the up/up (administratively up) mode. Read more »

Posted by gol & filed under CompTIA Linux+.

In order to make troubleshooting as easy as possible, you should always use an organized methodology. Using simple best practices will do just that. Read more »

Posted by gol & filed under CompTIA Linux+.

In order to keep your Linux system running smoothly, it is vital to maintain it properly.

Read more »

Posted by gol & filed under CompTIA Linux+.

As with any other operating system, administration efforts are necessary for any linux system. These include the following tasks: Read more »

Posted by gol & filed under CompTIA Linux+.

Configuring your Xwindows

No matter what desktop environment you chose, it is most likely that it will use the Xwindows architecture. Read more »

Posted by gol & filed under CompTIA Linux+.

Media

Linux installation can be done using a variety of different media. Each installation method has different pros and cons depending on the environment you have. Here are some examples: Read more »

Posted by gol & filed under CompTIA Linux+.

Linux Uses

Linux is a pretty flexible operating system. Although it has got a lot of credibility over the years as a stable server platform, it is also an excellent desktop platform. Databases, mail servers as well as many appliances can be installed. Read more »

Posted by gol & filed under CompTIA Linux+.

Linux is a 32 bit open source operating system. It is based on the very popular Unix operating system and it’s code is freely available (thus explaining the “open source” label as opposed to closed source where the code is not available freely). Read more »

Posted by gol & filed under Amazon AWS.

Amazon Simple Storage Service is storage for the Internet. It is designed to make web-scale computing easier for developers. Read more »

Do You Have What It Takes for a Career in Technology?

Information Technology (IT) has become such a widespread career choice. But few people actually know what IT is and what its discipline entails.

The digital world may tempt you to jump on the information technology wagon. But is it the right choice for you? Talk to one of our advisers and take our IT Assessment Test to see if IT is the right field for you.

I’m new to IT, what certifications are best for me?

Whether you’re a college student looking to advance your career ahead of your peers or from another sector trying to change your career. The Information Technology field offers plenty of opportunities regardless of where your true passion lies. But how can you boost careers — and fast? Not every career needs years to launch. In the tech industry, an education will help — but it isn’t 100 percent necessary. Take for instance the IT field. A handful of entry-level positions in this burgeoning field simply require a certification.

But you may be asking, which IT certification should I get first?

Build your foundation first

If you’re just starting out, you’d want to pursue IT certifications that acquaint you with how to maximize the use of computers, mobile and cloud technology in a business environment first.,

There are certifications that are Ideal for students and professionals who don’t have prior IT experience. These certifications are designed to get students and career changers up to speed on how computers, operating systems and networks function, providing the building blocks of IT.

IT certifications to start your career

CompTIA A+

Your ticket to help desk and technical support jobs. CompTIA A+ shows you know how to troubleshoot common tech problems in corporate environments.

Jobs related to CompTIA A+: Help Desk Technician, Technical Support Specialist, Systems Administrator, IT Technician, IT Assistant

Learn more about our: CompTIA A+ Bootcamp.

The ITIL® 4 Foundation

The ITIL® 4 (version 4) Foundation is the newest entry level Certification Course for IT Service Management Best Practices. It is designed to help businesses manage risks, strengthen customer relations, establish cost-effective practices and build stable IT environments for growth, scale and change.

Jobs related to ITIL 4 Foundation: Process coordinator, Incident Coordinator, Configuration Analyst, Service Desk Level 1, Support Specialist.

Learn more about our: ITIL 4 Bootcamp

IT certifications to explore specialties

CompTIA Network+

Proves your knowledge about managing enterprise networks, solving networking issues, troubleshooting network devices and keeping tabs on network security.

Jobs related to CompTIA Network+: Network Support Specialist, Network Administrator, Systems Administrator, Systems Analyst, Network Engineer

Jobs related to CompTIA Network+: Network Administrator, Network Engineer, Help Desk Support, Service Technician, IT support Specialist

Learn more about our: CompTIA Network+ Bootcamp

CompTIA Security+

Great for tech support and computer networking professionals who want to get into cybersecurity. This certification proves you know how to secure networks, keep digital data confidential and ward off hackers.

Jobs related to CompTIA Security+: Cybersecurity Specialist, Security Administrator

Learn more about our: CompTIA Security+ Bootcamp

AWS Certified Solutions Architect – Associate

Solutions architects optimize the use of the AWS Cloud by understanding AWS services and how these services fit into cloud-based solutions. This certification is a great introduction to cloud computing.

Jobs related to AWS Certified Solution Architect – Associate: Cloud Architect, Cloud Engineer, Operational Support Engineer, Cloud Software Engineer, System Integrator — Cloud

Learn more about our: AWS Certified Solution Architect – Associate Bootcamp

Whether you’re a college student or a professional who wants to change a career, IT certifications shows employers that you have what it takes and have the skills needed for the IT roles in their organizations.

College students can also avail a federally funded scholarship for your certifications. Learn more

Posted by admin & filed under CompTIA A+, MICROSOFT MTA O/S.

Security Settings

CompTIA A+ Exam objectives 2.6

(Compare and contrast the differences of basic Microsoft Windows OS security settings)

User and Groups

There are different levels of user accounts built into the Windows operating system. There are administrators, guests, and standard users. An administrator is the super-user of the Windows operating system. If you have administrative rights, then you effectively can control everything about the operating system.

There are also guest users in the Windows operating system. These guest users are disabled by default. But if you do enable the guest user, they will have limited access to the operating system. The majority of people that log in to Windows are standard users. These are people that are browsing the internet or working on spreadsheets or word processing documents. A standard user does not have full and complete access to the operating system, but they are able to use the operating system to perform day-to-day tasks.

There are also groups built into Windows. Some of these groups can be created to assign rights and permissions to others, and other groups are built into the operating system. A good example of this is the power users group that provides additional rights and permissions to a standard user without giving them all of the permissions that may be assigned to an administrator. When you access a file in the Windows operating system, your access to that file may be controlled through NTFS permissions or share permissions.

NTFS vs. Share Permissions

NTFS permissions are permissions assigned to the file system itself. This means if you’re accessing a file locally on the computer, the NTFS permissions will apply. And if you’re accessing that file across the network using a share, these NTFS permissions will also apply to you as well. There is a separate group of permissions that are associated with users connecting across a share. This means you can have one set of permissions for people who are accessing this file locally and a completely different set of permissions for somebody accessing it across the network.

As you can imagine, this could create a conflict. What if the NTFS permission is set to deny access, but the share permission is set to allow access? Whenever you have that type of conflict, the most restrictive setting will win, which means if the deny is set on this file on either one of those permissions, then they deny will beat that allow permission that may be somewhere else. NTFS permissions are inherited from parent objects in the file system, which means you don’t have to manually assign NTFS permissions to every single file. It will simply use the permissions assigned to the parent object.

If you move that file to a different volume, then the permissions will be associated with where you put it on that volume. If you move that file within the same volume, there is simply a pointer that’s changed in the file system, which means it will keep the permissions if you’re moving it within the same volume. In this view, we’re looking at two different sets of permissions that are pointing to the same folder. This would be the folder under Users, Professor, Documents, and Reports.

You can see there may be NTFS permissions that provide full access to this particular folder. But if you were to look at the share permissions, anybody connecting across the network would only have read access to this particular folder. There are a number of shares that are created automatically by the operating system during the installation process. These are administrative shares, and most of these shares are hidden from view.

For example, any share that has a dollar sign at the end of it is automatically hidden by the operating system. So a share that had a C$ would be the share for the entire C drive, but it would be hidden by other people that are connecting to the system. Another good example of administrative shares are the ADMIN$ share and the PRINT$ share. If you wanted to view the shares available on your system, you can go to the command line and use the net share command to list out all the share names and the resources associated with that share.

Shared Files and Folders

We mentioned earlier that permissions associated with a file in the file system can have all of those permissions inherited from a parent object. If you were to manually change the permissions for that file in the file system, those permissions would be called explicit permissions. Here’s an example of inherited permissions. Here’s a music folder on my Windows computer. And you can see there are a number of folders underneath the Music folder. This means that the Music folder would have the parent permissions, and the folders underneath the Music folder would have the child permissions.

If we were to set permissions on the Music folder to allow access, we won’t have to go to each individual folder to also allow access, because all of those permissions will be inherited from the parent object. If we configured the Music folder to provide access, then access to all of the child folders would also be allowed, because those permissions are inherited from the parent object. We can override these inherited permissions by changing the permissions ourselves. And when we change them, they would be explicit permissions.

Let’s take the example of our music folder. If we set up a deny permission to our music folder, then that particular set of permissions would be inherited by all of the child objects. But there may be a child folder that we would like to provide access to others, and we can explicitly define what folder we would like to assign. So even though all of the other permissions were inherited, we can specify our own permissions, and those would be explicit permissions.

Learn more about our CompTIA A+ Certification

Credits: Professor Messor

Posted by admin & filed under CompTIA A+, MICROSOFT MTA O/S.

System Utilities

CompTIA A+ Exam objectives 1.5

(Given a scenario, use Microsoft operating system features and tools.)

What are system utilities?

Utility programs are designed to carry out specific tasks. The tasks to be carried out are not typically performed by the operating system as part of its day to day operating of the system. Utility programs are designed for more specific purposes. Common examples of utility software include anti-virus software, disk defragmenters and system restoration.

Here is a picture example of some utilities, some of these will be covered, but there are some more important ones that will be explained for this lesson.

Here are the 12 system utilities needed for the A+ exam:

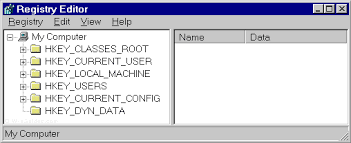

REGEDIT

The Windows Registry serves as an archive for collecting and storing the configuration settings of Windows components, installed hardware/software/application and more. A Windows component, hardware or a software, retrieves the registry entries or keys relating to it, every time it is started. It also modifies the registry entries or keys corresponding to it, in its course of execution. When keys are added to the registry, the data are sorted as computer-specific data or user-specific data in order to support multiple users.

The Regedit command launches regedit.exe

COMMAND

This command launches a standard command prompt for the user.

Services.msc

This command in the command console launches the services console.

MMC

MMC is a centralized data base that contains many tools which are typically scattered, and brings them all together so that the user may select which ones are needed.

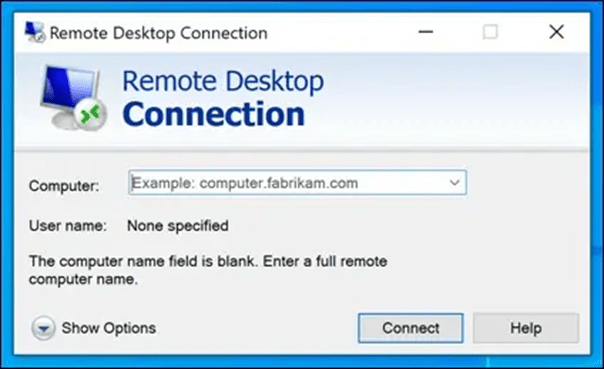

MSTSC

This program allows a PC connected by a remote desktop sessions to be able to edit the config files of different PCs using the RDP.

NOTEPAD

Notepad is a secure and reliable text editor in Windows.

EXPLORER

Explorer is Window’s file management system. It does many actions, such as creating, copying and renaming files and folders.

MSINFO32

Essentially launches system information. Windows says this :

You can use the MSINFO32 command-line tool switches to do all of the following:

- Use System Information from a batch file

- Create .nfo or .txt files that contain information from specified categories.

- Open System Information and display only specific categories.

- Save a file silently (without opening System Information).

- Start System Information connected to a remote computer.

- Create a shortcut that opens System Information in a frequently-used configuration.

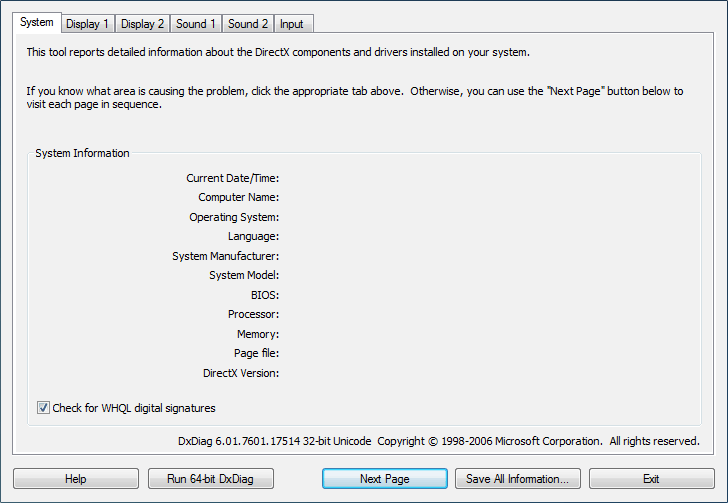

DxDiag

This tool can be used to collect information about DirectX sound and video. Can help for troubleshooting a problem.

Disk Defragmenter

Rearranges the file fragments on a disk into contiguous clusters to be able to read them faster.

System Restore

This utility can create system images, and then restores the system when asked by user.

Windows Update

Can be used to manage software and security issues, and allows microsoft to fix them quickly and uniformly.

Learn more about our CompTIA A+ Certification

Credits: HN Computing , Comodo , CertBlaster , Windows Help

Posted by admin & filed under CompTIA A+, MICROSOFT MTA O/S.

Server Roles

CompTIA A+ Exam objectives 2.5

(Summarize the properties and purposes of services provided by networked hosts.)

What do server roles achieve?

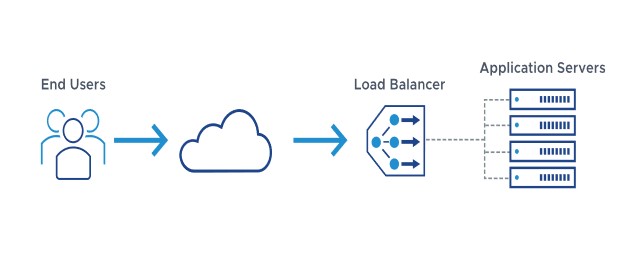

Server roles allow there to be more convenient or efficient options of doing specific tasks, such as accessing the internet, or using the printer.

Here are the 9 different types covered by CompTIA:

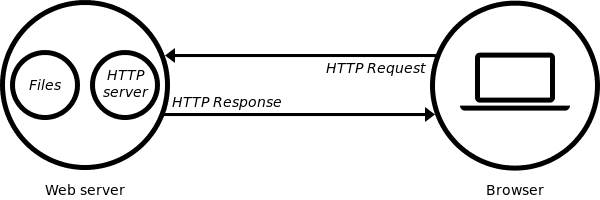

Web server

At the most basic level, whenever a browser needs a file which is hosted on a web server, the browser requests the file via HTTP. When the request reaches the correct web server (hardware), the HTTP server (software) accepts the request, finds the requested document (if it doesn’t then a 404 response is returned), and then sends it back to the browser, also through HTTP.

File server

A high-speed computer in a network that stores the programs and data files shared by it’s users. It acts like a remote disk drive. The difference between a file server and an application server is that the file server stores the programs and data, while the application server runs the programs and processes the data.

Print server

A computer in a network that controls one or more printers. The function is typically part of the operating system but may be an add-on utility that stores the print-image output from users’ machines and feeds it to the printer one job at a time. The computer and its printers are known as a “print server” or a file server with “print services.”

DHCP server

Dynamic Host Configuration Protocol (DHCP) is a network protocol that enables a server to automatically assign an IP address to a computer from a defined range of numbers (that is, a scope) configured for a given network.

DNS server

A dedicated server or a service within a server that provides DNS name resolution in an IP network. It turns names for websites and network resources into numeric IP addresses. DNS servers are used in large companies, in all ISPs and within the DNS system in the Internet, a vital service that keeps the Internet working. They are set up by network administrators and typically do not exist in the very small business or home.

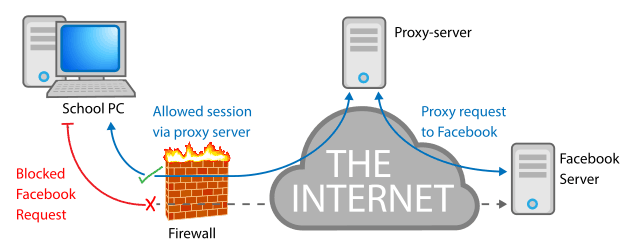

Proxy server

A proxy server, also known as a “proxy” or “application-level gateway”, is a computer that acts as a gateway between a local network (for example, all the computers at one company or in one building) and a larger-scale network such as the internet. Proxy servers provide increased performance and security.

A proxy server works by intercepting connections between sender and receiver. All incoming data enters through one port and is forwarded to the rest of the network via another port. By blocking direct access between two networks, proxy servers make it much more difficult for hackers to get internal addresses and details of a private network.

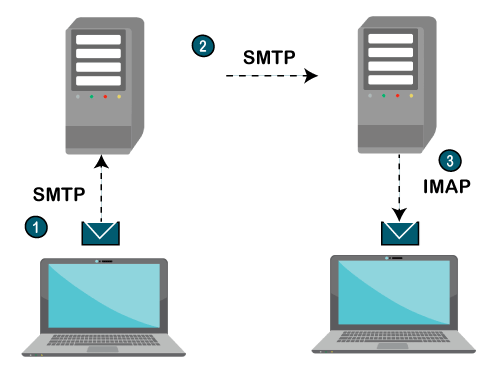

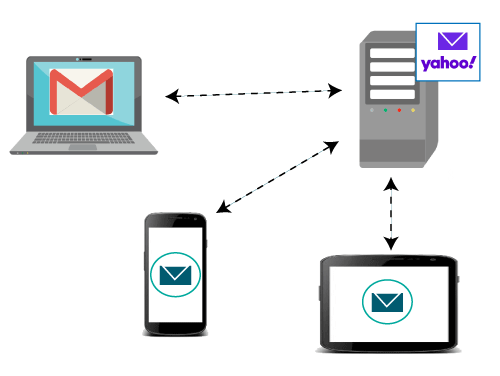

Mail server

A mail server (or email server) is a computer system that sends and receives email. In many cases, web servers and mail servers are combined in a single machine.

Authentication server

A device used in network access control. An authentication server stores the usernames and passwords that identify the clients logging in, or it may hold the algorithms for token access. For access to specific network resources, the server may itself store user permissions and company policies or provide access to directories that contain the information.

syslog

A protocol for transmitting event messages and alerts across an IP network. Messages are sent by the operating system or application at the start or end of a process or to report the current status of a process. Initially developed for the Unix sendmail application, syslog became commonly used in all environments and was made an IETF standard in 2001.

Learn more about our CompTIA A+ Certification

Posted by admin & filed under CompTIA A+, MICROSOFT MTA O/S.

Introduction to Cloud Computing

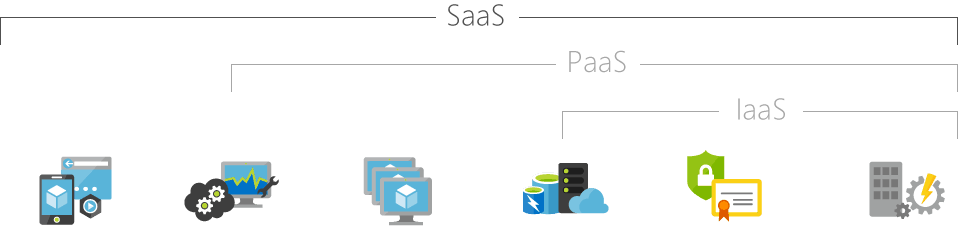

Simply put, cloud computing is the delivery of computing services—including servers, storage, databases, networking, software, analytics, and intelligence—over the Internet (“the cloud”) to offer faster innovation, flexible resources, and economies of scale. You typically pay only for cloud services you use, helping you lower your operating costs, run your infrastructure more efficiently, and scale as your business needs change.

IaaS

SaaS

PaaS

Infrastructure as a Service

Software as a Service

Platform as a Service

Infrastructure as a Service, sometimes abbreviated as IaaS, contains the basic building blocks for cloud IT and typically provide access to networking features, computers (virtual or on dedicated hardware), and data storage space. Infrastructure as a Service provides you with the highest level of flexibility and management control over your IT resources and is most similar to existing IT resources that many IT departments and developers are familiar with today.

Software as a Service provides you with a completed product that is run and managed by the service provider. In most cases, people referring to Software as a Service are referring to end-user applications. With a SaaS offering you do not have to think about how the service is maintained or how the underlying infrastructure is managed; you only need to think about how you will use that particular piece software. A common example of a SaaS application is web-based email where you can send and receive email without having to manage feature additions to the email product or maintaining the servers and operating systems that the email program is running on.

Platforms as a service remove the need for organizations to manage the underlying infrastructure (usually hardware and operating systems) and allow you to focus on the deployment and management of your applications. This helps you be more efficient as you don’t need to worry about resource procurement, capacity planning, software maintenance, patching, or any of the other undifferentiated heavy lifting involved in running your application.

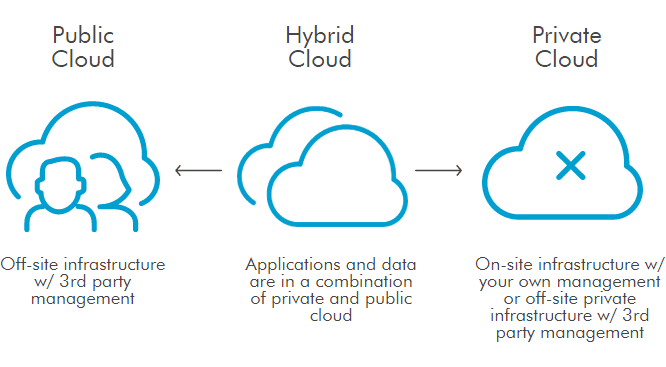

Cloud Computing Deployment Models

Public

-

It may be owned, managed, and operated by a business, academic, or government organization, or some combination of them.

-

Services are delivered over a network which is open for public usage.

Private

-

Exclusive user by a single organization comprising multiple consumers (e.g. business units).

-

The platform for cloud computing is implemented on a cloud-based secure environment that is safeguarded by a firewall which is under the governance of the IT department that belongs to the particular customer.

Hybrid

- The cloud infrastructure is a composition of two or more distinct cloud infrastructures (private,community, or public) that remains unique entities, but are bound together by standardized or proprietary technology that enables data and application portability.

Community

-

Provisioned for exclusive user by a specific community of consumers from organizations that have shared concerns.

-

It may be owned, managed, and operated by one or more of the organizations in the community, a third party, or some combination of them and it may exist on or off premises.

-

The setup is mutually shared between many organizations that belong to a particular community.

Credits: Microsoft Azure ,Amazon AWS, U.S DOI, Salesforce

Posted by Ajmal Ward & filed under CompTIA Security+, MICROSOFT MTA SECURITY.

Major Security Breaches of 2024: What You Need to Know

1. LastPass Breach (2024)

Date of Discovery: January 2024

In early 2024, LastPass, one of the leading password management platforms, suffered another breach, following a significant incident in 2022. Hackers infiltrated user vaults, gaining access to encrypted data. While the data remains encrypted, this breach raised concerns about the overall safety of sensitive information stored in password managers.

Impact:

- Encrypted user data compromised, including passwords.

- Users were urged to update master passwords and enable multi-factor authentication (MFA).

2. T-Mobile Data Breach (2024)

Date of Discovery: March 2024

T-Mobile experienced a significant data breach in March, affecting millions of customers. Cybercriminals accessed personal customer data, including phone numbers and billing information, through unauthorized access to the network.

Impact:

- Over 40 million customers affected.

- Exposure of personal details, but no financial data was compromised.

3. U.S. Health Insurance Data Breach (2024)

Date of Discovery: April 2024

A major U.S. health insurance provider reported a breach that exposed millions of customers’ personal health data. The breach occurred after hackers gained unauthorized access to sensitive medical and insurance information stored within the company’s system.

Impact:

- Over 30 million individuals affected.

- Exposed medical records, insurance information, and personal health data.

4. Microsoft Exchange Server Vulnerability Exploited (2024)

Date of Discovery: May 2024

Cybercriminals took advantage of a vulnerability in Microsoft Exchange Server, gaining unauthorized access to emails and sensitive company data. Although patches were rolled out quickly, many organizations were impacted before they had a chance to update their systems.

Impact:

- Thousands of organizations worldwide affected.

- Critical company data, including emails, exposed.

5. Volkswagen Group of America (VWoA) Data Breach (2024)

Date of Discovery: June 2024

Volkswagen’s U.S. division experienced a data breach in June, exposing millions of customer records. The breach involved unauthorized access to a system that stored sensitive information such as vehicle details, customer names, and addresses.

Impact:

- More than 3 million customer records compromised.

- Affected customers were notified and offered identity protection services.

6. UK’s National Health Service (NHS) Data Breach (2024)

Date of Discovery: July 2024

In July, the NHS reported a breach that compromised patient records via an external partner’s network. This breach exposed confidential medical records, and the stolen data raised concerns about privacy in healthcare systems.

Impact:

- Over 2 million patient records exposed.

- Ongoing efforts to secure patient data and prevent future breaches.

7. Twitter Data Breach (2024)

Date of Discovery: August 2024

A Twitter data breach in August involved hackers exploiting vulnerabilities in Twitter’s API to gain access to personal user information, including phone numbers and email addresses.

Impact:

- Affected over 200 million users.

- Personal details, including phone numbers and email addresses, exposed.

8. Uber Data Breach (2024)

Date of Discovery: September 2024

Uber was hit by a ransomware attack that targeted internal company data. This attack compromised sensitive business information, employee data, and customer details. The breach is believed to have been orchestrated by a hacker group with ties to larger cybercrime syndicates.

Impact:

- Exposed sensitive business data, including financial and customer information.

- Uber worked quickly to contain the breach and strengthen its cybersecurity measures.

9. Ransomware Attack on U.S. Schools (2024)

Date of Discovery: October 2024

A coordinated ransomware attack affected multiple U.S. school districts, disrupting online learning and encrypting educational systems. The attack forced many schools to shut down temporarily, affecting hundreds of thousands of students.

Impact:

- More than 500,000 students were affected by data loss and service disruption.

- Several districts opted not to pay the ransom, instead focusing on rebuilding and strengthening defenses.

10. Australian Broadcasting Corporation (ABC) Breach (2024)

Date of Discovery: November 2024

ABC in Australia suffered a significant data breach involving its internal systems, where hackers accessed sensitive documents and media plans. It’s believed the attackers may have had political motivations linked to espionage.

Impact:

- Exposure of internal documents, including media strategies and unbroadcasted stories.

- ABC has implemented further security measures to protect its data.

Are you looking to break into the exciting field of Cybersecurity? Join our 5-day CompTIA Security+ Boot camp Training and build your Cybersecurity knowledge and skills.

Posted by Ajmal Ward & filed under CompTIA Security+, MICROSOFT MTA SECURITY.

What's New in the Latest Version of CompTIA Security+ (SY0-701)?

The CompTIA Security+ certification has received a major refresh with the new SY0-701 version, reflecting the latest trends in cybersecurity. Here’s a quick look at what’s new:

- Cloud and Hybrid Security: Greater focus on securing cloud and hybrid environments, ensuring professionals can manage modern infrastructures.

- Threat Intelligence: Expanded coverage on threat intelligence, helping organizations anticipate and counter potential attacks.

- Advanced Security Architectures: Introduction to advanced frameworks like Zero Trust, emphasizing secure design and implementation.

- Automation & AI: Increased emphasis on automation and AI in security operations, from threat detection to ethical considerations.

- Governance, Risk, and Compliance: Strengthened focus on risk management, legal issues, and compliance frameworks.

- Incident Response & Forensics: Expanded scenarios for incident response and digital forensics, equipping professionals to handle breaches effectively.

- Secure Development: More coverage on secure coding practices and DevSecOps, integrating security throughout the software lifecycle.

This update ensures that Security+ certified professionals stay current with today’s cybersecurity challenges. Ready to take your skills to the next level? Dive into the new CompTIA Security+ SY0-701!

Are you looking to break into the exciting field of Cybersecurity? Join our 5-day CompTIA Security+ Boot camp Training and build your Cybersecurity knowledge and skills.

Posted by Ajmal Ward & filed under CompTIA Security+, MICROSOFT MTA SECURITY.

Why Spam is a Major Security Concern and How to Protect Yourself

Spam emails are a persistent nuisance that clog up our inboxes and waste our time, but they are much more than just an annoyance. Spam is a significant security concern that can pose a threat to individuals and organizations alike. In this blog, we will explore why spam is a security concern and provide some tips on how to protect yourself from spam.

How to Protect Yourself from Spam?

Use Spam Filters: Most email services and clients offer spam filters that can be used to automatically filter out unwanted and potentially dangerous emails. Make sure that your email provider has a robust spam filter and that you have it turned on.

Don’t Click on Links or Attachments: Be cautious when opening emails from unknown or suspicious senders, and avoid clicking on links or downloading attachments. If you are unsure about the authenticity of an email, verify it with the sender before opening any links or attachments.

Use Anti-Virus Software: Install reputable anti-virus software on your device and keep it updated to protect against malware and other threats.

Be Careful with Personal Information: Do not share personal information such as passwords or financial information in response to unsolicited emails or requests. Always verify the legitimacy of the request before sharing any personal information.

Educate Yourself: Stay informed about the latest spam and phishing trends and tactics, and educate yourself on how to identify and avoid them.

In conclusion, spam is a serious security concern that should not be taken lightly. By following these best practices and staying vigilant, you can protect yourself from spam and the threats it poses. Remember, when it comes to spam, prevention is always better than cure.

Posted by Ajmal Ward & filed under CompTIA Security+, MICROSOFT MTA SECURITY.

Understanding Trust in Information Security

As technology continues to evolve and the reliance on digital systems and networks increases, trust has become a crucial aspect of information security. Establishing and maintaining trust is essential in protecting sensitive data, ensuring the integrity of systems, and mitigating security risks. In this comprehensive guide, we will delve into the concept of trust in the context of CompTIA Security+ certification and explore its key components and implications.

Authentication: The Foundation of Trust

At the heart of trust in information security is authentication, the process of verifying the identity of a user, device, or system. Authentication methods can include something a user knows, something a user has, or something a user is. We will explore various authentication methods, such as passwords, smart cards, and biometric recognition, and discuss best practices for implementing strong authentication mechanisms.

Authorization: Determining Access Rights

Once a user, device, or system has been authenticated, authorization comes into play. Authorization determines what actions or resources an authenticated entity is allowed to access. We will delve into the concept of authorization, including role-based access control (RBAC) and other authorization models, and discuss how to implement effective authorization mechanisms to prevent unauthorized access and data breaches.

Trust Models: Establishing Trust Relationships

Trust models are frameworks used to establish and manage trust between different entities in a system or network. We will explore common trust models, such as single sign-on (SSO) frameworks, multi-factor authentication (MFA) systems, and public key infrastructure (PKI) implementations. We will discuss their strengths, weaknesses, and best practices for implementation to ensure secure and trusted interactions between entities.

Trust Boundaries: Managing Interfaces

Trust boundaries are the points or interfaces where different levels of trust meet or interact. Managing trust boundaries is crucial in preventing security breaches and ensuring the integrity of systems and networks. We will discuss how to identify and manage trust boundaries, including considerations for physical and logical boundaries, and best practices for securing these critical points of interaction.

Trustworthiness: Ensuring Reliability and Security

Trustworthiness is the overall reliability, integrity, and security of a system or network. It involves implementing appropriate security controls, maintaining system updates and patches, and following best practices for securing data, systems, and networks. We will explore the concept of trustworthiness and discuss how to implement measures to ensure the trustworthiness of information systems and networks.

Conclusion: Trust as a Pillar of Information Security

In conclusion, trust is a foundational concept in information security and plays a critical role in protecting sensitive data, systems, and networks. Understanding and managing trust is essential for information security professionals and is a key topic covered in the CompTIA Security+ certification exam. By comprehensively understanding the components of trust, including authentication, authorization, trust models, trust boundaries, and trustworthiness, information security practitioners can effectively mitigate security risks and safeguard valuable information assets.

Whether you are a security professional preparing for the Security+ certification exam or an IT practitioner looking to enhance your knowledge of information security, this comprehensive guide on understanding trust in information security will provide valuable insights and practical recommendations for establishing and maintaining trust in today’s complex digital landscape. Trust is a critical pillar of information security, and mastering its concepts is essential for protecting against security threats and ensuring the confidentiality, integrity, and availability of information and resources.

Posted by Ajmal Ward & filed under CompTIA Security+, MICROSOFT MTA SECURITY.

Identity fraud

Identity fraud, also known as identity theft, is a serious crime that can have devastating consequences for individuals and businesses alike. With the increasing digitization of our lives and the proliferation of personal information online, the risk of falling victim to identity fraud is higher than ever. In this blog, we will delve into what identity fraud is, the risks associated with it, and steps you can take to protect yourself.

What is Identity Fraud?

Identity fraud occurs when someone steals your personal information and uses it without your consent to commit fraudulent activities, such as making unauthorized purchases, opening bank accounts or credit cards, filing false tax returns, or even committing crimes in your name. Personal information that can be used for identity fraud includes your name, address, social security number, date of birth, phone number, email address, financial account numbers, and more.

The Risks of Identity Fraud

The risks of identity fraud are numerous and can have serious consequences for victims. Some of the risks associated with identity fraud include:

- Financial Losses: Identity thieves can drain your bank accounts, make fraudulent purchases using your credit cards, and even open new credit accounts in your name, leaving you with significant financial losses and damage to your credit score.

- Legal Troubles: If an identity thief commits crimes using your personal information, you may find yourself facing legal troubles, including being wrongly accused of criminal activities.

- Emotional Distress: Discovering that your personal information has been stolen and misused can be emotionally distressing, causing anxiety, stress, and a sense of violation.

- Time and Effort to Resolve: Resolving the aftermath of identity fraud can be time-consuming and require significant effort, including contacting financial institutions, credit bureaus, and law enforcement agencies, filling out paperwork, and dealing with the bureaucratic process.

Protecting Yourself from Identity Fraud

While identity fraud can be a serious threat, there are steps you can take to protect yourself and reduce your risk of falling victim to this crime. Here are some important measures you can implement:

- Safeguard Your Personal Information: Be cautious about sharing your personal information online, and only provide it to trusted sources. Avoid sharing sensitive information on social media platforms, and be cautious about the information you share over the phone or via email.

- Use Strong and Unique Passwords: Use strong, unique passwords for all your online accounts, and avoid using common passwords or reusing passwords across different accounts. Consider using a password manager to help you generate and store complex passwords securely.

- Monitor Your Financial Accounts: Regularly monitor your bank and credit card accounts for any unauthorized transactions or suspicious activity. Report any discrepancies immediately to your financial institution.

- Be Cautious of Phishing Attempts: Be wary of emails, phone calls, or text messages that request your personal information or financial details. Be cautious of clicking on links or downloading attachments from unknown sources, and verify the legitimacy of any communication before providing any sensitive information.

- Secure Your Devices: Keep your devices, including your computer, smartphone, and tablet, secure with up-to-date antivirus software, firewalls, and security patches. Avoid using public Wi-Fi networks for sensitive transactions and be cautious of downloading apps or software from unknown sources.

- Check Your Credit Reports: Regularly review your credit reports from the major credit bureaus (Equifax, Experian, and TransUnion) to check for any suspicious activity or inaccuracies. You are entitled to a free credit report from each bureau every year.

- Consider Identity Theft Protection Services: Consider enrolling in an identity theft protection service that offers monitoring, alerts, and assistance in case of identity fraud. Do your research and choose a reputable service with good reviews.

Conclusion

In conclusion, identity fraud is a serious crime that can have severe consequences for individuals and businesses. With the increasing digital landscape and the proliferation of personal information online, it’s crucial to take steps to protect yourself from falling victim to identity fraud. By safeguarding your personal information, using strong and unique passwords, monitoring your financial accounts, being cautious of phishing attempts, securing your devices, checking your credit reports, and considering identity theft protection services, you can significantly reduce your risk of identity fraud. Stay vigilant, be cautious, and take proactive measures to protect your personal information and financial well-being. Remember, prevention is key when it comes to identity fraud, and taking action now can save you from potential devastating consequences in the future.

Posted by Ajmal Ward & filed under CompTIA Security+, MICROSOFT MTA SECURITY.

Whaling

Phishing attacks, a form of cyber attack where malicious actors trick individuals into revealing sensitive information, have become increasingly sophisticated in recent years. One type of phishing attack that has gained prominence is “whaling,” which targets high-level executives and individuals with access to valuable data or funds. Whaling attacks are highly targeted and personalized, making them difficult to detect and defend against. In this blog, we will explore the concept of whaling, the risks it poses to organizations, and how the implementation of security measures, such as Security+, can help protect against this advanced form of phishing.

Understanding Whaling:

Whaling, also known as CEO fraud or business email compromise (BEC), is a type of phishing attack that focuses on high-profile individuals, such as CEOs, CFOs, and other executives. Unlike traditional phishing attacks, which may cast a wide net and target a large number of individuals, whaling attacks are carefully crafted and highly targeted. Cybercriminals conduct thorough research on their victims, gathering information from publicly available sources, social media, and other online platforms to create a convincing facade. They then use this information to send fraudulent emails that appear to be from a trusted source, often posing as a high-ranking executive or a trusted business partner, in order to trick the victim into taking a specific action, such as transferring funds or revealing sensitive information.

Risks of Whaling:

Whaling attacks pose significant risks to organizations, as they can result in financial losses, reputational damage, and data breaches. High-level executives and individuals with access to critical data or financial resources are prime targets for whaling attacks, as their actions can have a significant impact on the organization. Whaling attacks often exploit the human element of cybersecurity, relying on social engineering techniques to manipulate victims into taking actions that may compromise security. The personalized and convincing nature of whaling attacks makes them difficult to detect using traditional security measures, and organizations need to implement specialized security measures to effectively mitigate the risks.

Whaling Security+:

Security+ is a well-known and widely used certification offered by CompTIA, which focuses on information security and validates the skills and knowledge required to secure IT systems and networks. Implementing Security+ best practices can help organizations protect against whaling attacks by enhancing email security, strengthening authentication methods, and providing employee training on identifying and responding to whaling attempts. Some key Security+ practices that can be applied to mitigate whaling risks include:

- Email Authentication: Implementing technologies such as Domain-based Message Authentication, Reporting, and Conformance (DMARC), Sender Policy Framework (SPF), and DomainKeys Identified Mail (DKIM) can help verify the authenticity of incoming emails and detect spoofed or fraudulent emails.

- Employee Training: Providing regular and comprehensive training to employees, especially high-level executives and individuals with access to sensitive data, on identifying and responding to whaling attempts can help increase awareness and reduce the likelihood of falling victim to such attacks.

- Access Control: Implementing strong access control measures, such as multi-factor authentication (MFA), to limit access to critical systems and data can help prevent unauthorized access in case of a successful whaling attack.

- Incident Response: Establishing a robust incident response plan that includes procedures for detecting, reporting, and responding to whaling attacks can help organizations quickly mitigate the impact of a successful attack and prevent further damage.

Conclusion

Whaling attacks pose significant risks to organizations, and it is crucial to implement effective security measures to protect against this advanced form of phishing. Security+, with its focus on information security, can provide organizations with the necessary skills and knowledge to strengthen their defenses against whaling attacks. By implementing email authentication, providing employee training, enforcing access controls, and establishing incident response plans

Posted by Ajmal Ward & filed under CompTIA Security+, MICROSOFT MTA SECURITY.

FREE ISC2 Certified in Cybersecurity Exam Voucher

Did you know that you can use this FREE exam codes to register for ISC2 Certified in Cybersecurity℠ – CC

See www.asmed.com/s1

Are you passionate about technology and interested in a career that offers limitless opportunities? You don’t need prior experience to start your journey in cybersecurity—just the drive to learn and succeed. With the global demand for cybersecurity professionals at an all-time high, now is the perfect time to explore this exciting and rewarding field.

Why Cybersecurity?

Cybersecurity is more than just a job; it’s a mission to protect data, systems, and people from digital threats. As technology evolves, so does the need for skilled professionals who can safeguard our interconnected world. By joining the cybersecurity workforce, you’re not only securing your future but also contributing to the safety and security of countless organizations and individuals worldwide.

ISC2's Commitment to Closing the Cybersecurity Workforce Gap

In an effort to close the cybersecurity workforce gap and bring more diversity into the field, ISC2 is offering a groundbreaking opportunity: FREE Certified in Cybersecurity (CC) Online Self-Paced Training and exams for one million people. This initiative is designed to empower individuals from all backgrounds to kickstart their cybersecurity careers and become part of the world’s largest association of certified cybersecurity professionals.

How to Start Your Journey

Participating in the One Million Certified in Cybersecurity program is simple. Here’s how you can get started:

- Create an Account: If you don’t already have an ISC2 account, you’ll need to create one. If you’re already a member, simply sign in.

- Complete Your Application: Fill out the ISC2 Candidate application form and select “Certified in Cybersecurity” as your certification of interest.

- Access Your Training: Once your application is complete, you’ll become an ISC2 Candidate. This status gives you access to the Official ISC2 Certified in Cybersecurity Online Self-Paced Training and allows you to register for the free certification exam. Access all your resources on the Candidate Benefits page.

- Take the Exam: After completing the training, take the certification exam. Upon passing, you’ll need to complete an application form and pay a U.S. $50 Annual Maintenance Fee (AMF).

- Become Certified: Once you pass the exam and complete all the required steps, you’ll become a certified member of ISC2. As a member, you’ll join the world’s largest association of certified cybersecurity professionals and gain access to a wealth of professional development resources to support your career growth.

Ready to Get Started?

Don’t miss this unique opportunity to break into the cybersecurity field with the support of ISC2. Whether you’re looking to make a career change or advance your current skills, this program provides everything you need to succeed.

Posted by Ajmal Ward & filed under CompTIA Security+, MICROSOFT MTA SECURITY.

How to prepare for CompTIA Network + Job?

Posted by Ajmal Ward & filed under Amazon AWS.

FREE AWS Exam Retake codes

Looking to advance your career and validate your skills? Get AWS Certified and stand out from other professionals with a recognized credential. Boost your confidence with an extra exam retake opportunity (AWPR9A223835), available only with Pearson VUE. Take advantage of this limited-time promotion to schedule and complete your exam between March 15 and May 31, 2023. If you need it, you’ll receive a free retake when you schedule and complete your exam on or before August 1, 2023

To get started:

Click on image or follow the link end of the page:

- Login or create an AWS training account

- Register for your exam

- Apply the promo code during checkout to qualify for a free exam retake

- Validate your skills and show your professional network that you stand out from other professionals.

Follow the link: https://home.pearsonvue.com/AWS/free-retake

Posted by Ajmal Ward & filed under CompTIA Network+, MICROSOFT MTA NETWORKING.

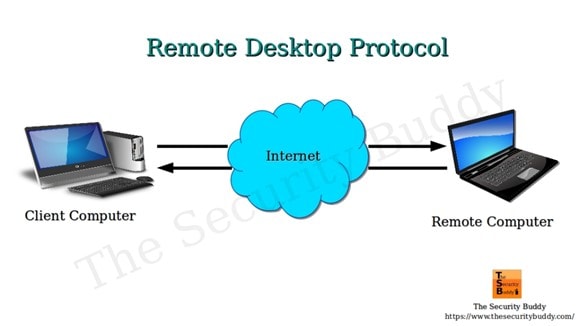

Remote Desktop Protocol (RDP)

RDP is a Microsoft-designed technology that allows two computers to share a GUI using a network connection.

RDP is a proprietary technology initially built by Microsoft that allows two computers to exchange a graphical user interface (GUI) using a standardized network connection. This article explains the meaning of RDP, how it works, its benefits, and the challenges to consider.

What Is Remote Desktop Protocol?

Remote work has existed for quite a while but has recently been brought to the limelight. The COVID-19 pandemic highlighted how much employees could complete from the comfort of their homes. But it also showed the limitations of remote work and its risk for the business. One of those limitations and risks is the need to duplicate the office environment at home, including sensitive files, documents, subscribed applications, etc.

An employee that works with sensitive information might be limited from working remotely even when there is little alternative. This is where the Remote Desktop Protocol (RDP) comes into the scene.

Remote Desktop Protocol is a safe protocol for communication between computer networks. It is an exclusive protocol built by Microsoft that furnishes the user on one desktop with a graphical user interface that they can use to connect with another computer over a network connection directly. For this to work, the user must have the RDP software installed on his computer from which he accesses the other computer running the RDP server.

The Remote Desktop Protocol connection is a tool that allows users to connect to another windows or PC in another location over the internet. The user located far away will be able to log in to the home PC, view the desktop and access the files stored in it, and use the peripheral devices like the mouse and keyboard to control the office PC, just as though they were in front of it.

The Remote Desktop Protocol is not just a tool for remote workers to access their office desktops; it is also invaluable to network admins as they can diagnose and fix non-structural system malfunction without being physically present. Remote employees, those in transit, at a conference, support technicians, and network administrators can use RDP for regular maintenance.

Microsoft developed RDP, but it can link different types of computers. The client that is the PC, the user is logged into can run on multiple operating systems like Windows, macOS, Unix, and Android. At the same time, the server is built for specific operating systems, majorly Windows.

How Does Remote Desktop Protocol Work?

The working principle of the RDP is quite simple and uncomplicated. Like other Remote Desktop software, RDP gives you remote control over another system. However, RDP is the most common protocol used for this purpose.

How does RDP work?

Anything you will control remotely, be it an object or, in this case, a computer system, must be able to receive some signal. Take, for instance, drones. For a drone to move in a direction or change course, it must receive radio signals from the drone controller in the hands of the pilot. Remote Desktop Protocol works by a similar yet different mechanism, but first, we must understand what the client and server represent in the RDP network.

- Server: The server, otherwise known as the host, is the computer you want to connect to and is accessible from any location. It requires the RDP software to be installed on it.

- Client: The client is the remote computer operated by the user who has the authorization to connect to and control the host desktop remotely.

When using Remote Desktop Protocol, signals are sent over the internet rather than radio waves. These signals include input signals from the keyboard and mouse and output display signals from the server. RDP opens a particular channel through the Transmission Control Protocol (TCP or TCP/IP) and sends the information packets in an encrypted format to improve the network’s security. Currently, RDP uses the network port 3389 to transfer all data related to Remote Desktop access.

Before sending the information to the host, the transport driver is in charge of packaging the data. From there, Microsoft communications services direct it to the Remote Desktop ppl to COL prepared channel where the operating system encrypts it and is transmitted.

Encrypting and transmitting data to the host computer over the internet and receiving the desktop display at every point can cause delays in use. Therefore, RDP requires fast internet services to adequately handle the workload while creating a pleasant experience for the user.

When using Remote Desktop access, it is possible to add extra transport drivers for other network protocols depending on peripheral users’ demand to connect to the host computer. This level of independence in the TCP/zip stack improves the performance of RDP and makes it an extensible network.

Properties of the Remote Desktop Protocol

The working principle of RDP is reflected in its properties. These include smart card verification, ability to display on several screens, reduced bandwidth, 128-bit encryption for data sent from keyboard and mouse using the RC4 encryption, sending audio from the host to the client computer, sharing clips between computers, using local printers to print out documents from remote information, and so on.

With RDP, up to 64,000 different channels can be used to transmit data, and with the ability to reduce bandwidth, data transfer can still occur with sub-optimal network conditions. It is essential to know that some of these features are, however, only accessible in the enhanced sessions. With this unique set of properties, Remote Desktop Protocol has three primary use cases:

- They are used by individuals for remote desktops to their office PC when working from home, working part-time, or even their home PC when in transit or on holidays.

- It enables remote troubleshooting by a technician or a friend helping another person.

- Network admins can use RDP for remote administration ofIT infrastructure.

Benefits of Remote Desktop Protocol

Using the RDP protocol, one can gain the following benefits:

1. Makes device management easier

Managing a company’s or organization’s computer network is not a very easy job. It has challenges, and troubleshooting technical problems is just part of it. IT administrations must ensure that devices comply with company policy while remaining accessible to existing and potential new users or employees.

Sometimes, computers malfunction, either due to hardware or software failure. Other times remote users accessing the host server may unintentionally make settings that affect operation. If the server desktop is in a location that is not easily accessible, one can still fix technical issues from a remote location.

IT admins also have to ensure that installed software remains updated. With Remote Desktop Protocol, the job of the IT admin is considerably less challenging and not restricted to their presence in the office building. The admin can remotely control, make changes in the setting, control permission, limit access, etc., all in real-time.

2. Simplifies data access and management

One intriguing benefit of RDP is the ease at which data can be accessed and managed. Remote Desktop Protocol does not require complex instructions and procedures to access data from a computer system or database.

Users can do so from even a phone with just log-in details. The human mind is only so limited in the information it can store after it has left the work environment. Opportunities may then arise where it is necessary to recall some vital data. Remote Desktop Protocol makes this not only possible but easy.

The system can also manage data remotely, not limited to data access. Managers or human resources can monitor the information being entered into the database at leisure, ensure financial records are accurate and in sync with production or sales, and watch the working hours of workers covertly.

3. Supports remote working

In current times, it is not unheard of to find a company with more than 70% of its staff working from home. It was usually seen among software developers but now extends to all workers, like content creators, personal assistants, research assistants, marketers, product designers, and so on. Some workers may visit the office building weekly or on random days. RDP makes it easier for a company to have remote employees and maintain high excellence and efficiency.

4. Enforces maximum security

Remote Desktop Protocol caters to network security in several ways. With RDP, there is an addition of professionals in charge of maintaining the integrity of the server. This includes ensuring protection against the latest security threats. More so, there is constant data encryption for every information sent across the network.

This protects against hackers that may try to access vital data as it’s sent over the internet. With Remote Desktop Protocol, data loss is safeguarded against. Not just because of multiple screen sharing but also because one can easily recover files due to backup. Lastly, sensitive information containing financial records or confidential clients can be marked off and restricted from being viewed by just any remote employee.

5. Enables cost-savings

Another benefit of RDP is its cost-effectiveness. It saves money for any company and individual employing the technology. For devices that have Remote Desktop Protocol enabled, they can be easily repaired by technicians from afar. This alone reduces the maintenance cost of operating a device.

A company that invests in Remote Desktop Protocol can expect a healthy return on investment. Having more work done remotely and perhaps some full-time remote staff saves time and energy usually expended on transit. This maximizes productivity and increases the ROI of the company.

6. Works with multiple operating systems

One challenge encountered again and again with computer systems is operating systems compatibility. Many software programs are developed every day, yet the majority are selective on the type of device they can effectively run on. Remote Desktop Protocol may not be compatible with every operating system in the book, but it goes a long way. The RDP server previously was limited to a Windows-based system but now includes macOS. The clients can access the server from multiple servers, including Android and iOS mobile phones.

7. Increases productivity

Remote Desktop Protocol can go a long way to increase the productivity of any enterprise that uses the technology, from large multi corporations to small businesses and startups. The work environment is one of the primary factors that influence an employee’s productivity. Employees outfitted with the latest technological advancement and provisions like RDP will enjoy exploring such tools. Also, someone who is not confined to the four walls of an office or the three walls of a cubicle, as the case may be, is more creative and expressive in carrying out tasks.

Some ways RDP increases productivity include:

• Every team member uses the best operating system with high performance irrespective of the type of computer hardware they may have in the office.

• Field employees can have the same level of access to data, similar to their colleagues, and can also attribute information directly to the company’s database.

• Remote users can easily access company files stored on the server hardware without much expertise. This is in contrast to cloud storage which may prove challenging to navigate.

• Multiple applications on the host server are made available for peripheral users to improve their ability to work on projects.

• Employees can have a say in their working environment which ultimately improves job outlook, job satisfaction, and productivity.

Challenges of Remote Desktop Protocol

Remote Desktop Protocol is not without a few challenges. These include;

- The risk of downtime:RDP is a system that inadvertently puts most of its users at risk if there is disruption from a significant source. This means that downtimes can be abrupt when they occur, and the implication is far-reaching across every RDP client in their various locations.

- Multiple causes of interruption:Downtime could result from a break in consistency, system failure, or network services from the company providing the service. Downtime can be from the host computer; an event such as hardware theft or destruction can cause a backlash on other users.

- Network dependency:Similar to the above mentioned, the RDP framework will work similarly as long as all outsider PCs have solid and dependable web associations accessible to them. If not, the system is entirely out of reach. Further, remote employees can have latency issues if they have a slow internet connection.

- Bottlenecks:Depending on the host system’s power and how many are trying to access it simultaneously, blockages can be caused and reduce performance.

- The need for expert knowledge:The RDP manager must have complete information regarding the matter and be promptly contactable if and when any issues ought to happen during ordinary working hours. Without the vital assistance on reserve to go to in case of a framework blackout, the outcomes could be critical.

- Increased security vulnerabilities:Remote access is a double-edged sword regarding system security. Although it comes with data encryption, access controls, and activity logging, it introduces additional security vulnerabilities that could be used as attack points. Security vulnerabilities, such as susceptibility to hash attacks and computer worms, are not ideal for sustained use over time.

You’ll notice that, for instance, it’s challenging to keep tabs on everyone accessing your system remotely. You can’t physically authenticate all the users. That makes it easy for attackers to infiltrate the system using genuine accounts and then leave unnoticed. In other cases, users leverage compromised VPN services, which hackers then manage to take advantage of to gain unauthorized access.

Despite these challenges, RDP can be useful for administering remote work management and access, especially for companies using an on-premise IT infrastructure.

Takeaway

Remote desktop protocol has become the standard for sharing desktops and other GUI interfaces over networked Microsoft systems. However, enterprises should keep in mind that heavy bandwidth utilization may impact performance. Besides bandwidth strain and security risks, remote desktop protocol or RDP has a few cons. This makes it a compelling solution in the era of remote and hybrid working.

Posted by Ajmal Ward & filed under CompTIA Network+, MICROSOFT MTA NETWORKING.

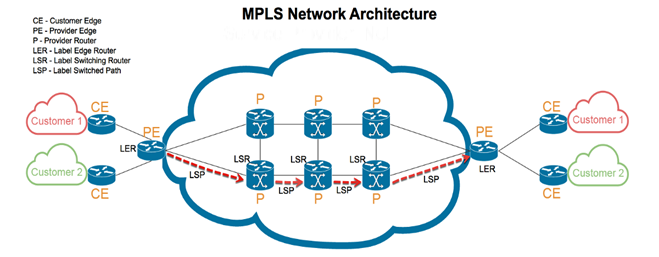

Multi-Protocol Label Switching (MPLS)

MPLS – short for Multi-Protocol Label Switching – is a now-aging network routing system that transfers data between nodes using labels that denote predetermined pathways instead of network addresses that refer to the nodes themselves. This article explains how MPLS works, its types, and the core architecture.

What Is MPLS (Multi-Protocol Label Switching)?

MPLS – short for Multi-Protocol Label Switching – is defined as a now-aging type of network routing system that transfers data between nodes using labels that denote predetermined pathways instead of network addresses that refer to the nodes themselves.

Since its inception in the 1960s, the internet has evolved in more ways than was ever imagined. Amazingly, the internet is still changing, bringing us closer and closer to newer technologies yet undiscovered. Data transfer over the internet has as well evolved. Data transfer is perhaps the most critical function of the internet in connecting millions of computers worldwide.

Traditionally, the standard Internet Protocol (IP) and the Transfer Control Protocol (TCP) have regulated how data packets are moved from one point to the other. In this protocol, each router must make an independent decision about every tiny bit of data packet and determine where the network should send it. Multi-Protocol Label Switching was created to circumvent this bottleneck in data transfer across the internet.

Understanding multi-protocol label switching

Multi-Protocol Label Switching or MPLS is a technique used to route and direct traffic in communication technology that uses labels in place of addresses to handle data flow from one router to the other. Ideally, these addresses identify endpoints for each data packet. However, labels do not focus on the destination but instead on routes and pathways that have already been established.

MPLS is a networking technology that directs traffic consisting of data packets along networking routes but through the shortest path described on the labels.

Multi-Protocol Label Switching is one of the Internet Protocol (IP) routing techniques that can work on numerous packets covering more than one network protocol and, as such, is referred to as a multi-Protocol system. Multi-Protocol Label Switching, therefore, supports technologies such as the Asynchronous Transport Mode (ATM), Frame Relay, DSL, etc.

The MPLS transfer protocol primarily controls the forwarding of packets over a private Wide Area Network (WAN), for example, a company with several remote outlets or branches connected to the main center. It resolves the issue of slow data transfer and downtime when using the internet but remains a scalable and protocol-independent technology.

When comparing Multi-Protocol Label Switching with other data transfer methods, MPLS is a technology that increases the speed at which data flows across a network. This is simply because the need for looking up complex routing tables at every node has been eliminated. Previously, each node in the local internet mesh served as a router determining the path for incoming packets by searching through complex tables.

Multi-Protocol Label Switching was initially released in 2001 by the internet engineering task force (IETF). It released both the architecture of the technology and its label stack encoding. MPLS performed similarly to the ATM switch as a faster routing technique than the conventional method. MPLS, however, did not have the setbacks ATM had. MPLS also has the advantage of out-of-band control and maintenance of traffic engineering.

How Does MPLS Work?

Multi-Protocol Label Switching works by addressing incoming packets to their destination based on the information written on their labels. It does not try to guess the address but uses labels to find an established bandwidth for the data packet.

MPLS works in a manner that is slightly similar to IP routing techniques. When a regular router receives an incoming data packet, the only information on the packet is the destination IP address without further details on the routes or manner in which the network should transport the packet. In MPLS, the label contains information about the routes the data packet should take. This eliminates the cumulative delay by routers in ‘thinking’ of the best possible course.

When a data packet enters a Multi-Protocol Label Switching network, it is given a specific forwarding Class of Service (CoS), also called Forwarding Equivalence Class (FEC). The class of service forms a part of the label, showing what type of information is contained in the data packet, be it real-time data like VoIP or emails. With this label, the routers can reserve the fastest paths with the least latency to highly sensitive real-time information like Voice over Internet Protocol (VoIP) and video conferencing.

When a data packet enters an MPLS network, the entry node is called a Label Edge Router or ingress node. The class of service is then added, specifying the type of information in the packet and its priority level. In MPLS, there are predetermined, unidirectional pathways linking routers across the network; the Label Switched Path (LSP). Networks can only forward data packets after the LSP has been established and the ingress node has encapsulated the packet in the LSP.

Other nodes within the network are called the label switch routers, which are transit nodes ensuring continuous data flow. The information in the packet label guides the transit nodes, and stops are minimized. After passing through the ingress nodes and transmit nodes, the last router is called an egress node, and it removes the label so the packet address can be read and delivered to the destination.

The MPLS uses a networking protocol that is somewhat a combination of Layer 2 (data link layer) and Layer 3 (IP layer) of the Open Systems Interconnection (OSI) model. This is why MPLS is generally considered a layer 2.5 networking protocol, having features from both for data transfer across a network. Its functionality is enabled by the following

Components of the MPLS label:

- Label/label value: It is a 20-bit long field containing the information routers read in directing the data packet.

- Traffic class field: This is a 3-bit long part of the label used to set the Quality of Service and explicit congestion notification.

- Bottom of the stack: Labels can be stacked on top of each other, and the topmost label is in charge of delivery and is replaced by other labels underneath it until the transfer is complete. The last label in an MPLS header is referred to as the bottom of the stack.

- Time to Live (TTL): It is an 8-bit long label that decreases in value each time the packet hops and therefore limits the packet’s lifespan.

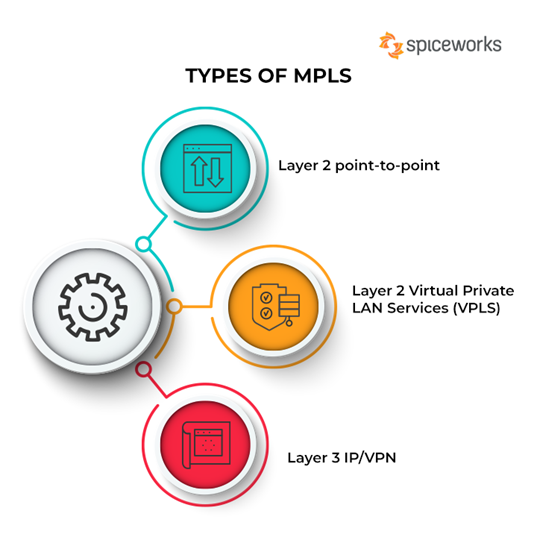

Types of MPLS

MPLS technology can be of three types. These are:

Types of MPLS

1. Layer 2 point-to-point

Layer 2 point-to-point is a type of MPLS suitable for companies that need high bandwidth connections connecting a few locations together while maintaining cost-effectiveness. Examples of practical use of layer 2 point-to-point include several network operations with their primary network infrastructure built using Ethernet and layer 2.

Layer 2 point-to-point is an excellent alternative to high bandwidth leased lines. It is not bound by internet protocol and can send data running in the Local Area Network (LAN) directly to the WAN without needing routers to change the packets to be compatible with layer 3 of the OSI model. Here are its pros and cons:

- Pros: With this type of MPLS, the need to manage complex routing tables has been eliminated. Also, it is cost-effective, as WAN connections can be directly linked with layer 2 switches, eliminating the need for expensive routers.

- Cons: It is challenging to get circuits of less than 10Mbps in bandwidth as providers only sell high bandwidth circuits. Further, it does not support point-to-multipoint connections.

2. Layer 2 Virtual Private LAN Services (VPLS)

Layer 2 Virtual Private LAN Services (also known as Layer 2 VPLS) is now becoming more sought after for its ability to provide Ethernet services. Layer 2 VPLS combines the Multi-Protocol Label Switching with the Ethernet and extends the benefits to end customers and carriers.

For over 20 years, LAN has predominantly used Ethernet switching for connectivity, while the carrier network relies on internet protocol routing. Internet protocol not only provides internet access but also provides virtual private network (VPN) access.

Ethernet, however, has continued to be widely used over various bandwidths because it requires little technical knowledge and remains more affordable. Ethernet is now the infrastructure of choice in both LAN and WAN. Virtual Private LAN Services (VPLS) is an ideal protocol that can provide its users with Multi-Protocol Label Switching and Ethernet, therefore diverting all the traffic in Layer 2 directly to the wide area network. In addition, VPLS remains simple, easy, affordable, and highly scalable. Here are its pros and cons:

- Pros: It provides a transparent interface that does not require investment in hardware such as routers to upgrade bandwidth. Traffic is labeled with a MAC address as opposed to an IP address, and like all switched networks, Layer 2 VPLS offers lower latency periods than a router network will offer. Configuration and deployment are straightforward, even for newly added sites.

- Cons: Layer 2 VPLS is still being used only in some parts of the world and has not attained global reach. Therefore this limits the applicability of any feature. The absence of routers as part of the hardware infrastructure places the layer 2 VPLS at higher risk of storm damage. Monitoring is complex due to a lack of visibility from the providers.

3. Layer 3 IP/VPN

Layer 3 IP/VPN is a type of MPLS network most suitable for large enterprises covering multiple branches over a vast land mass. This includes corporations with offices spread across the globe, industries located in more than one country, etc.

Layer 3 IP/VPN is a service that is naturally a continuation of the ATM and legacy frame relay models. Layer 3 IP/VPN transports data packets based on labels attached as the packets enter the ingress nodes. Therefore, it is highly suitable for companies that are merging for easy scalability and rapid deployment.