Top 5 CompTIA Certifications to Advance Your IT Career in 2025

The Information Technology sector is growing at an unprecedented rate, and CompTIA certifications have become a gold standard for IT professionals looking to validate their skills and advance their careers. As we approach 2025, employers are increasingly seeking candidates who can demonstrate up-to-date expertise through recognized certifications. In this guide, we'll explore the top five CompTIA certifications that can help you stand out in the competitive IT job market and position yourself for success.

Why Choose CompTIA Certifications?

CompTIA (Computing Technology Industry Association) certifications are vendor-neutral credentials respected globally. These certifications validate essential IT skills, from foundational knowledge to specialized expertise in cybersecurity, networking, and cloud computing. Here's why earning a CompTIA certification can be a smart move:

- Global Recognition: Trusted by employers around the world.

- Career Advancement: Opens doors to higher-paying IT roles.

- Vendor-Neutral: Skills apply across products and platforms.

- Up-to-Date Content: Certifications are regularly revised to match industry needs.

- Accessible for All Levels: Certifications span from entry-level to expert.

The Top 5 CompTIA Certifications in 2025

Let’s review the most sought-after CompTIA certifications that can propel your IT career forward in 2025.

1. CompTIA A+

CompTIA A+ serves as the ideal starting point for anyone beginning their IT journey.

Widely recognized as an entry-level certification, A+ covers the foundational IT skills expected of support specialists and help desk technicians.

- Key Takeaways: Installing, configuring, and managing PCs and mobile devices, basic networking, and troubleshooting.

- Exam Codes (2024-2025): 220-1101 & 220-1102

- Job Roles: IT Support Specialist, Field Service Technician, Desktop Support Analyst

By earning the A+ credential, you demonstrate your ability to support and maintain critical IT infrastructure, making you a valuable asset to any tech-driven organization.

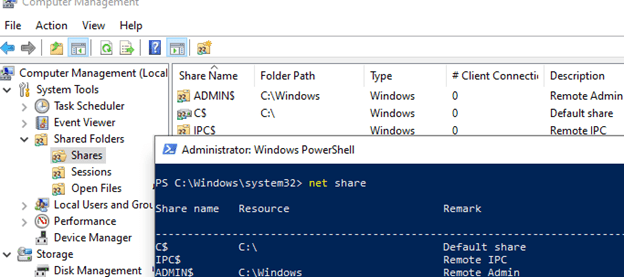

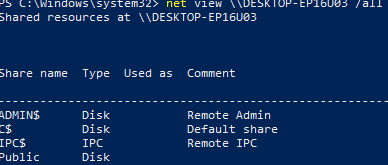

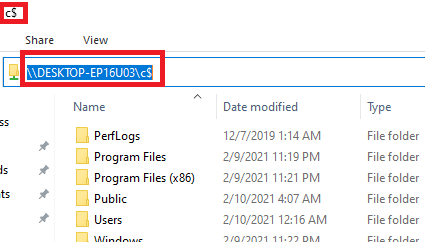

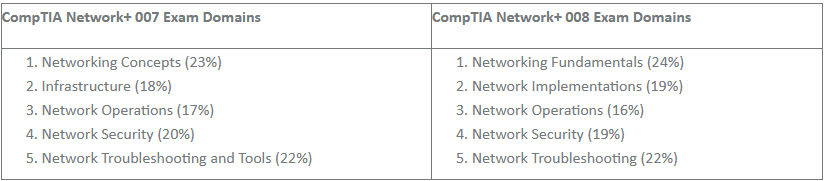

2. CompTIA Network+

As enterprise networks become more complex, the demand for skilled network professionals continues to rise.

CompTIA Network+ validates your ability to design, manage, and troubleshoot both wired and wireless networks.

- Key Takeaways: Network design, implementation, configuration, management, and security principles.

- Exam Code: N10-009

- Job Roles: Network Administrator, Network Technician, Systems Engineer

This certification signals that you have the practical knowledge required to keep businesses connected and secure.

3. CompTIA Security+

Security skills are a cornerstone of modern IT, especially as threats become more sophisticated.

CompTIA Security+ is globally acknowledged as a foundational security certification for IT professionals.

- Key Takeaways: Threat management, cryptography, access control, risk identification, and mitigation.

- Exam Code: SY0-701

- Job Roles: Security Administrator, Systems Administrator, Security Specialist

Holding the Security+ credential assures employers that you understand core security principles and best practices, and it helps you meet the certification requirements of many government and regulated roles.

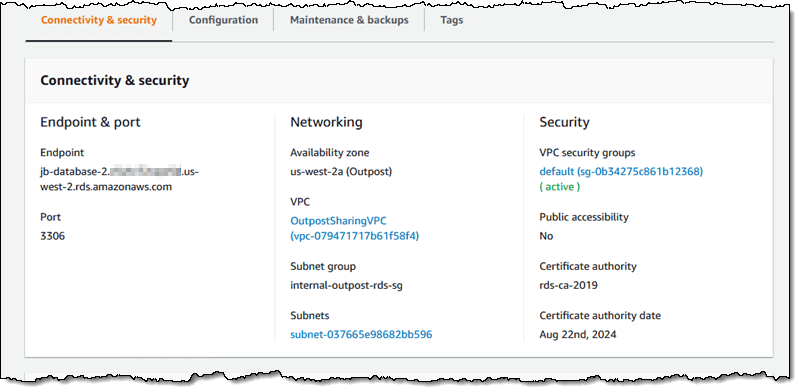

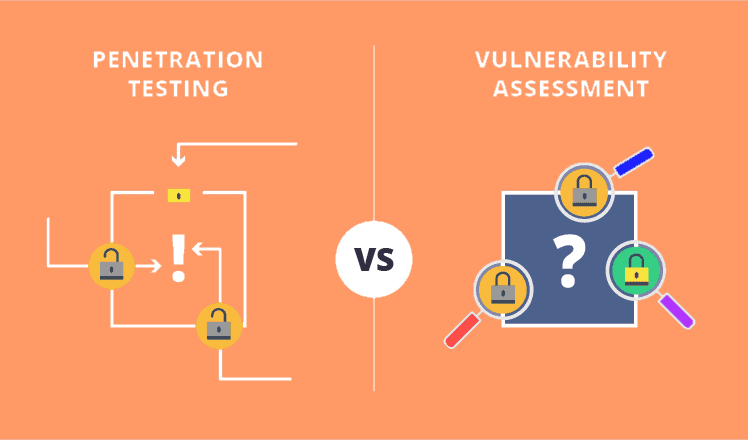

4. CompTIA CySA+ (Cybersecurity Analyst)

For professionals ready to go beyond foundational cybersecurity, CompTIA CySA+ validates practical, up-to-date skills in security analytics and incident response.

- Key Takeaways: Threat detection, vulnerability assessment, security monitoring & automation, and digital forensics.

- Exam Code: CS0-003

- Job Roles: Cybersecurity Analyst, Threat Intelligence Analyst, Security Operations Center (SOC) Analyst

With cyberattacks growing in frequency, organizations seek professionals who can detect and respond to threats in real time. CySA+ demonstrates these skills.

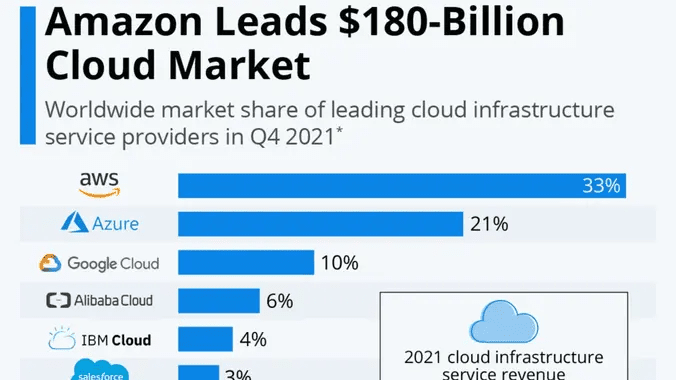

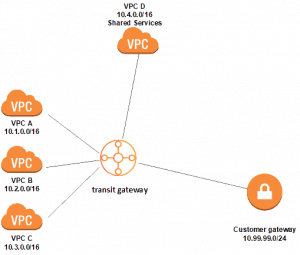

5. CompTIA Cloud+

Cloud computing expertise is in high demand as businesses migrate their operations to the cloud.

The CompTIA Cloud+ certification validates your ability to manage and secure cloud environments efficiently.

- Key Takeaways: Cloud architecture, deployment, security, automation, and troubleshooting.

- Exam Code: CV0-004

- Job Roles: Cloud Engineer, Cloud Administrator, Systems Engineer

Holders of this credential demonstrate their readiness for hybrid and multi-cloud environments, making them attractive to future-focused organizations.

How to Choose the Right CompTIA Certification

Selecting the right certification depends on your experience level, career interests, and long-term goals. Here are some tips to guide your decision:

- Entry-Level Professionals: Start with CompTIA A+ and progress to Network+ or Security+.

- Networking Enthusiasts: Pursue CompTIA Network+ to build strong network troubleshooting and management skills.

- Cybersecurity Aspirants: After Security+, CySA+ is an excellent path to specialized security roles.

- Cloud Career Seekers: Choose Cloud+ to stay ahead in today’s evolving IT infrastructure landscape.

- Continuous Learning: Consider stackable credentials by combining multiple CompTIA certifications.

Tips for Passing CompTIA Exams

Preparing for a CompTIA exam requires dedication and a strategic approach. Follow these best practices:

- Study the Exam Objectives: Download and review official exam blueprints.

- Join Study Groups: Collaborate with peers for shared knowledge and tips.

- Use Practice Tests: Simulate the actual testing environment and identify weak areas.

- Hands-On Labs: Gain real-world experience through labs or simulations.

- Set a Study Schedule: Consistency is key to retaining information.

The Future of CompTIA Certifications

As technological advancements continue to reshape the IT landscape, CompTIA remains committed to evolving its certifications. Future updates are likely to place greater emphasis on automation, artificial intelligence, and cloud-native security solutions.

Conclusion

In 2025, staying relevant and competitive in the IT industry will require up-to-date skills and certifications. The top five CompTIA certifications outlined above are among the most valued by employers and can empower you to take the next step in your career.

Invest in your professional development by pursuing one or more of these certifications, and prepare to unlock exciting new opportunities in the dynamic world of IT.

Ready to boost your career? Start your journey with CompTIA today!