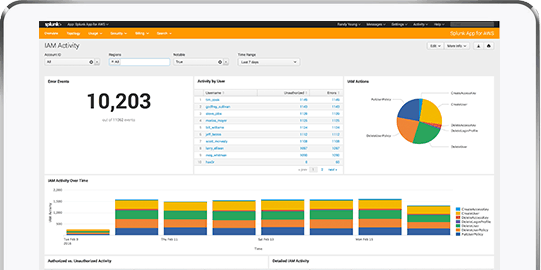

AWS CloudTrail

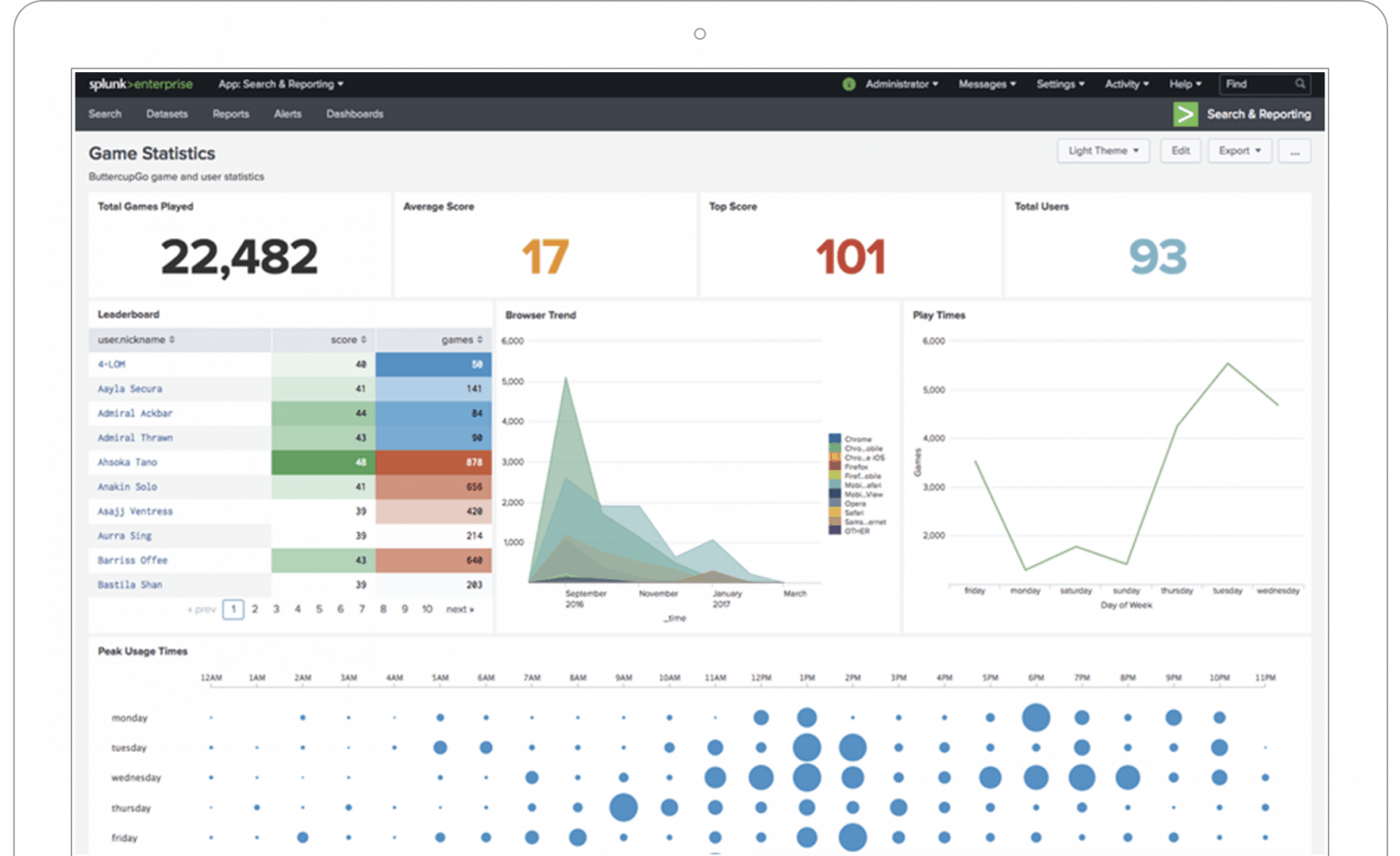

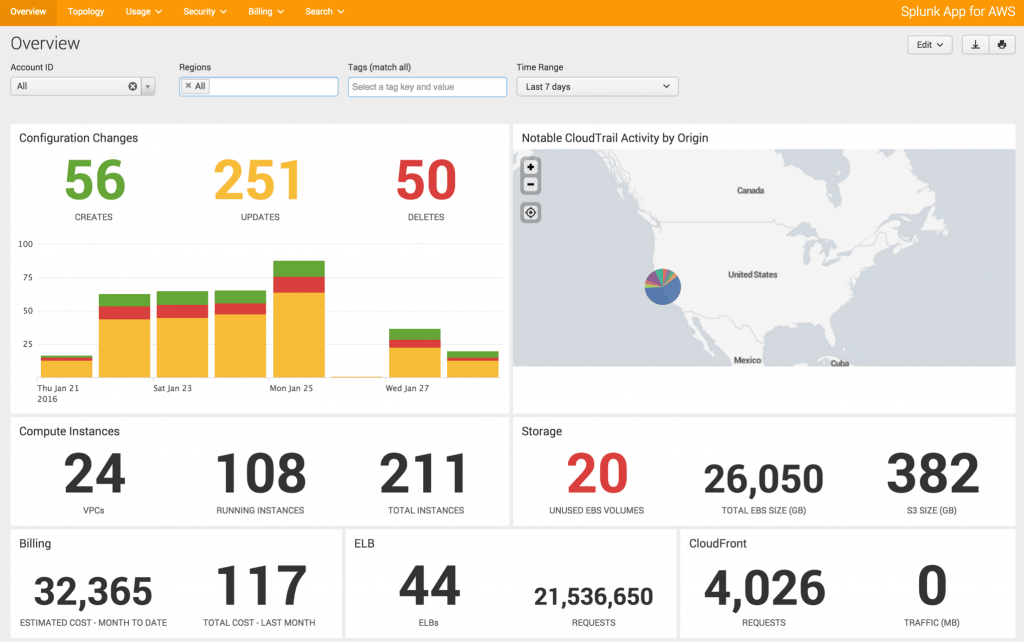

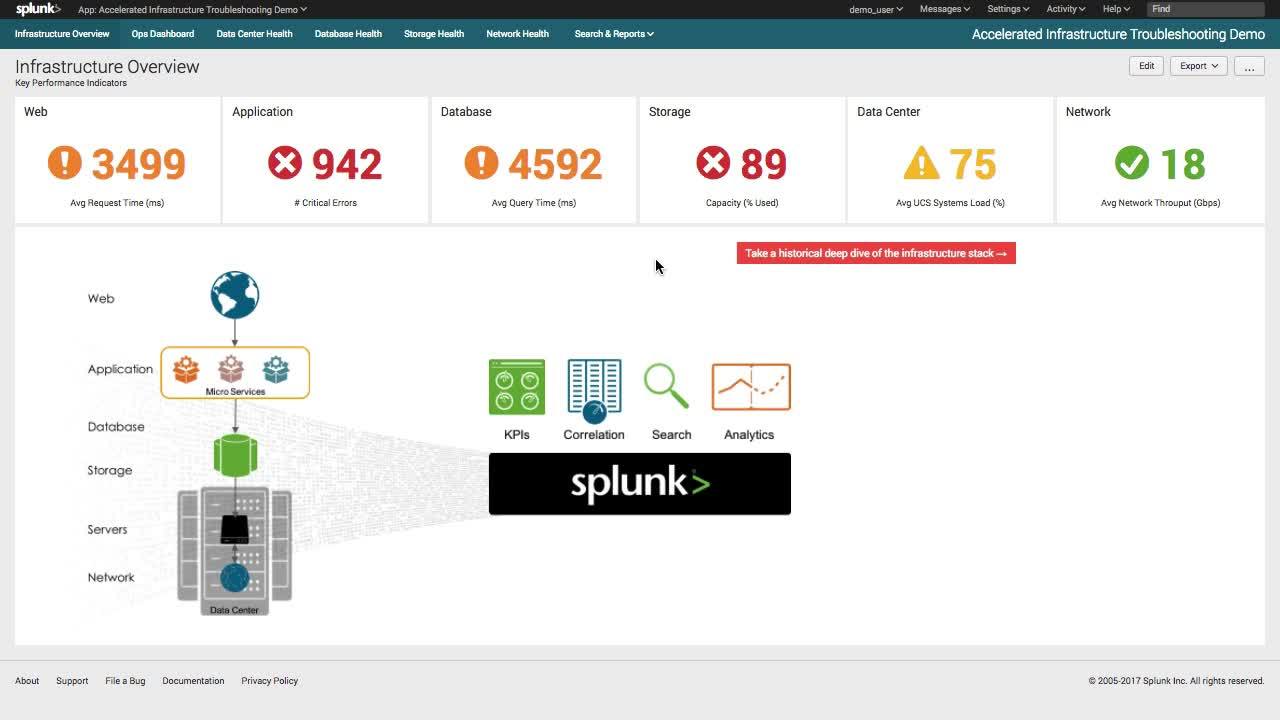

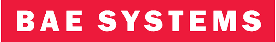

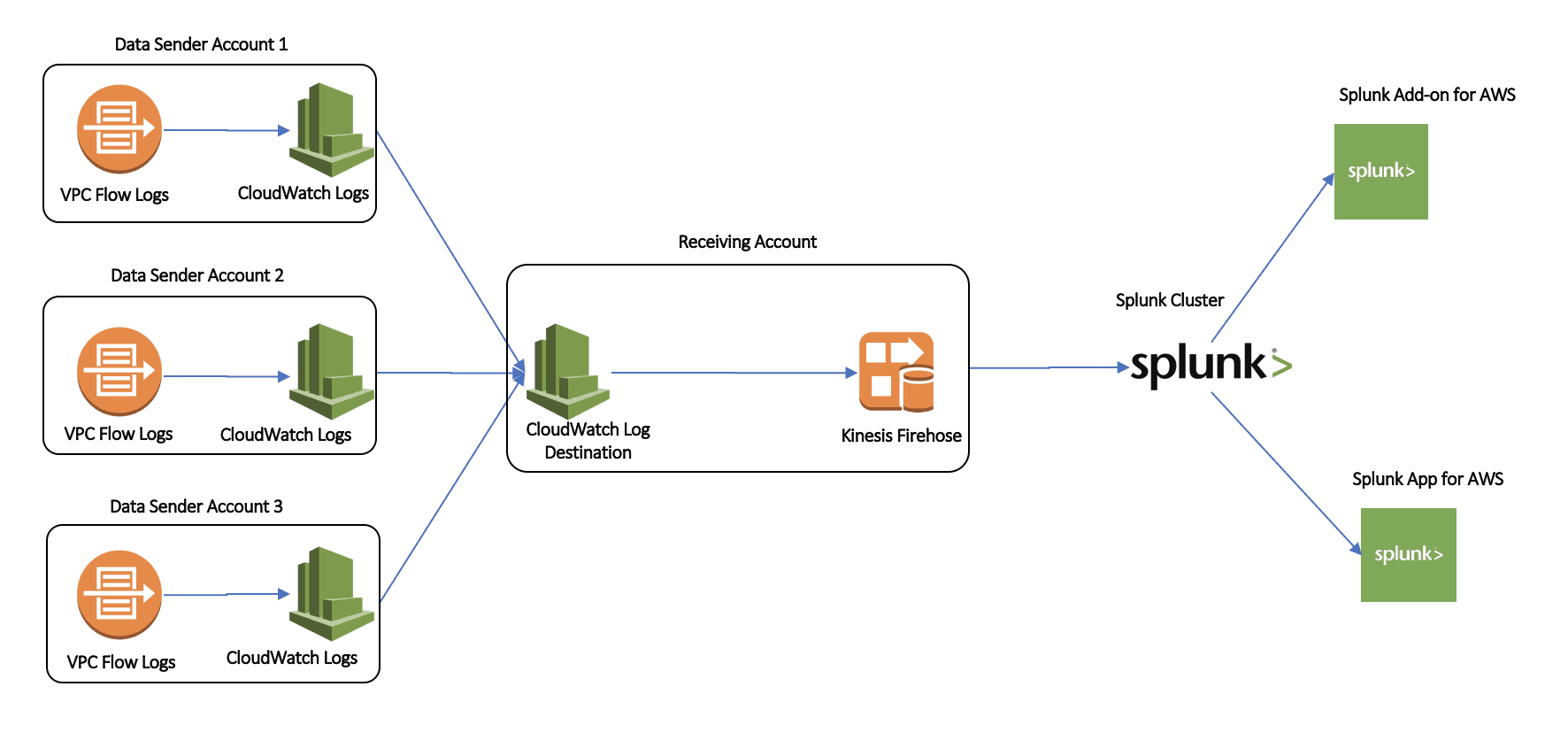

About the Splunk App for AWS

The Splunk App for AWS gives you critical operational and security insight into your Amazon Web Services account. The app includes a pre-built knowledge base of dashboards, reports, and alerts that deliver real-time visibility into your environment.

AWS Config with Splunk

In addition to displaying Amazon CloudWatch logs and metrics in Splunk dashboards, you can use AWS Config data to bring security and configuration management insights to your stakeholders. The currently recommended way to get AWS Config data into Splunk is a pull strategy, in which Splunk retrieves the data from AWS rather than AWS pushing it to Splunk.
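A pull strategy boils down to polling a source on a schedule and remembering what has already been ingested. The minimal Python sketch below illustrates that pattern under stated assumptions: `fetch_page` is a hypothetical callable standing in for whatever paginated AWS call a collector actually makes, and the in-memory `seen_ids` set is a simplistic checkpoint. It is not how the Splunk App or Add-on for AWS is implemented.

```python
from typing import Callable, List, Optional, Set, Tuple

# Hypothetical page-fetching signature: given a continuation token (None for
# the first page), return (records, next_token); next_token is None when
# there are no more pages. Wire this to whatever AWS call you actually use.
FetchPage = Callable[[Optional[str]], Tuple[List[dict], Optional[str]]]


def pull_once(fetch_page: FetchPage, seen_ids: Set[str], id_key: str = "id") -> List[dict]:
    """Walk every available page once and return only records not seen before.

    `seen_ids` is a simple checkpoint that is updated in place, so calling
    this on a schedule does not re-ingest records from earlier polls.
    """
    new_records: List[dict] = []
    token: Optional[str] = None
    while True:
        records, token = fetch_page(token)
        for record in records:
            record_id = record[id_key]
            if record_id not in seen_ids:
                seen_ids.add(record_id)
                new_records.append(record)
        if token is None:
            return new_records


if __name__ == "__main__":
    # Fake two-page source standing in for a real AWS API.
    pages = {
        None: ([{"id": "cfg-1"}, {"id": "cfg-2"}], "page-2"),
        "page-2": ([{"id": "cfg-3"}], None),
    }
    checkpoint: Set[str] = set()
    print([r["id"] for r in pull_once(pages.__getitem__, checkpoint)])  # ['cfg-1', 'cfg-2', 'cfg-3']
    print(pull_once(pages.__getitem__, checkpoint))  # [] because everything was already ingested
```

In a real deployment the checkpoint would need to survive restarts (for example, persisted to disk), which this sketch leaves out.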

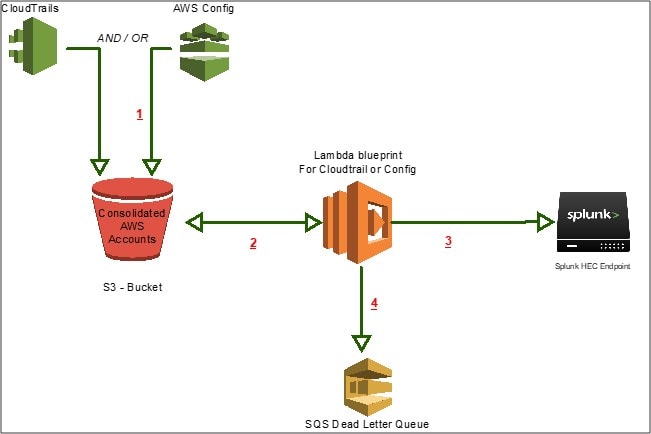

AWS Config

AWS Config is a service that enables you to assess, audit, and evaluate the configurations of your AWS resources. Config continuously monitors and records your AWS resource configurations and allows you to automate the evaluation of recorded configurations against desired configurations.
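AWS Config performs this evaluation through Config rules. As an illustration of the idea only, the Python sketch below compares a simplified, assumed configuration-item shape (`resourceType`, `resourceId`, `configuration`) against a hypothetical desired configuration and returns the compliance labels AWS Config uses (`COMPLIANT`, `NON_COMPLIANT`, `NOT_APPLICABLE`). The function names and the example rule are illustrative; this is not the AWS Config rules API.

```python
from collections import Counter
from typing import Dict, Iterable


def evaluate_item(item: dict, resource_type: str, desired: Dict[str, object]) -> str:
    """Compare one recorded configuration item against a desired configuration.

    `item` uses a simplified, assumed shape:
    {"resourceType": ..., "resourceId": ..., "configuration": {...}}.
    """
    if item.get("resourceType") != resource_type:
        return "NOT_APPLICABLE"  # the rule does not cover this resource type
    actual = item.get("configuration") or {}
    for key, expected in desired.items():
        if actual.get(key) != expected:
            return "NON_COMPLIANT"  # first mismatching setting decides
    return "COMPLIANT"


def summarize(items: Iterable[dict], resource_type: str, desired: Dict[str, object]) -> Counter:
    """Count how many items fall into each compliance label."""
    return Counter(evaluate_item(i, resource_type, desired) for i in items)


if __name__ == "__main__":
    # Hypothetical desired configuration: EC2 instances must be t3.micro.
    items = [
        {"resourceType": "AWS::EC2::Instance", "resourceId": "i-1", "configuration": {"instanceType": "t3.micro"}},
        {"resourceType": "AWS::EC2::Instance", "resourceId": "i-2", "configuration": {"instanceType": "t3.large"}},
        {"resourceType": "AWS::S3::Bucket", "resourceId": "b-1", "configuration": {}},
    ]
    print(dict(summarize(items, "AWS::EC2::Instance", {"instanceType": "t3.micro"})))
    # {'COMPLIANT': 1, 'NON_COMPLIANT': 1, 'NOT_APPLICABLE': 1}
```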

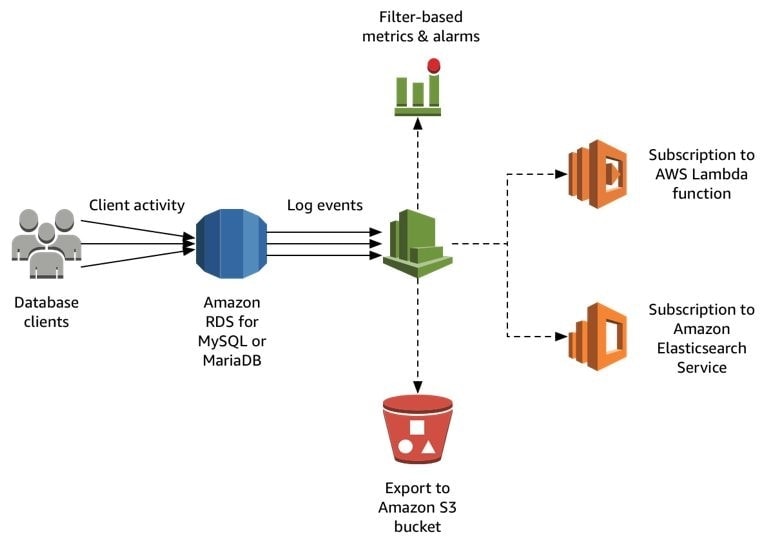

Amazon RDS

Amazon Relational Database Service (Amazon RDS) makes it easy to set up, operate, and scale a relational database in the cloud. It provides cost-efficient and resizable capacity while automating time-consuming administration tasks such as hardware provisioning, database setup, patching, and backups.

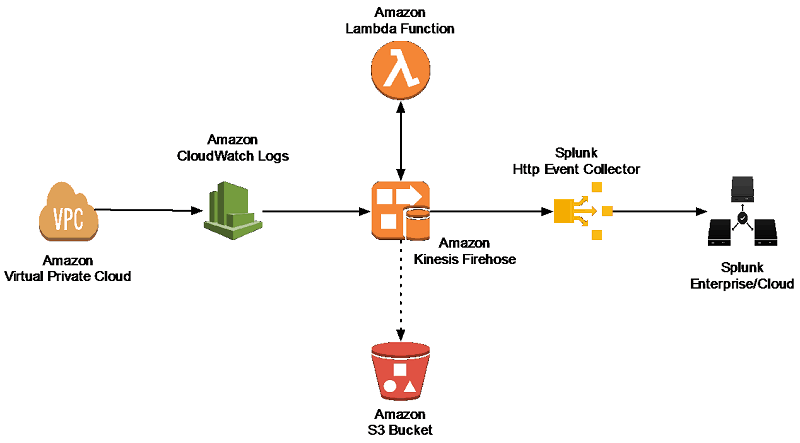

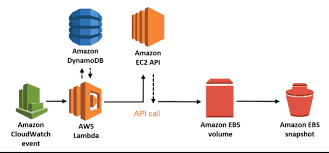

Amazon CloudWatch

Amazon CloudWatch is a monitoring service for AWS cloud resources and the applications you run on AWS. You can use Amazon CloudWatch to collect and track metrics, collect and monitor log files, set alarms, and automatically react to changes in your AWS resources.
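CloudWatch alarms are commonly configured as "M out of N" evaluations: the alarm fires when at least M of the last N datapoints breach a threshold. The Python sketch below illustrates that logic for a greater-than-threshold comparison. It is a simplification that ignores CloudWatch's configurable treatment of missing data, and the function and parameter names are illustrative rather than the CloudWatch API.

```python
from typing import Sequence


def alarm_state(
    datapoints: Sequence[float],
    threshold: float,
    evaluation_periods: int,
    datapoints_to_alarm: int,
) -> str:
    """Simplified 'M out of N' alarm check using a greater-than comparison.

    Looks at the most recent `evaluation_periods` datapoints (N) and returns
    ALARM when at least `datapoints_to_alarm` (M) of them exceed `threshold`.
    Missing data is simplified: fewer than N datapoints -> INSUFFICIENT_DATA.
    """
    if not 1 <= datapoints_to_alarm <= evaluation_periods:
        raise ValueError("datapoints_to_alarm must be between 1 and evaluation_periods")
    window = list(datapoints)[-evaluation_periods:]
    if len(window) < evaluation_periods:
        return "INSUFFICIENT_DATA"
    breaching = sum(1 for value in window if value > threshold)
    return "ALARM" if breaching >= datapoints_to_alarm else "OK"


if __name__ == "__main__":
    cpu_percent = [40, 85, 90, 50, 95]  # illustrative CPU utilization samples
    print(alarm_state(cpu_percent, threshold=80, evaluation_periods=3, datapoints_to_alarm=2))  # ALARM
```

Requiring M of N rather than every datapoint lets an alarm tolerate a single dip below the threshold without resetting.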

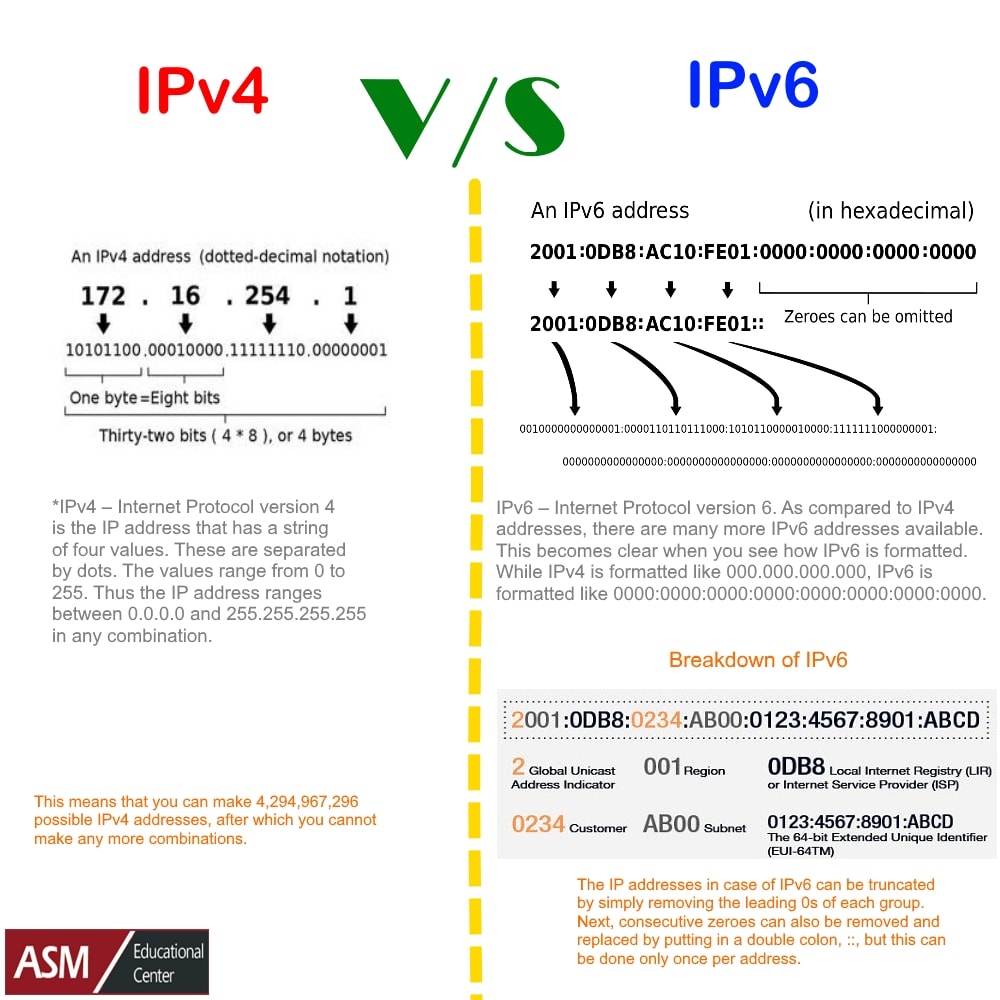

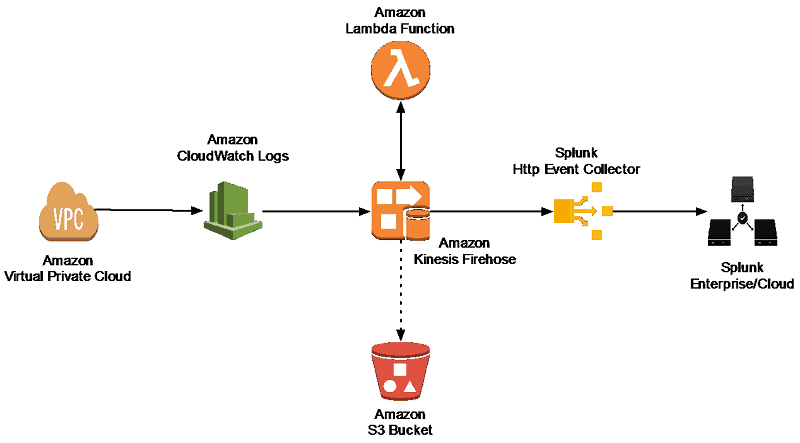

Amazon VPC Flow Logs

VPC flow logging lets you capture and log data about the network traffic in your VPC. It records information about the IP traffic going to and from designated network interfaces and stores these raw records in Amazon CloudWatch Logs, where they can be retrieved and viewed.
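Each flow log record is a single space-separated line of fields. The minimal Python sketch below parses records in the default (version 2) format, assuming the default field order: version, account ID, interface ID, source and destination address, source and destination port, protocol, packets, bytes, start, end, action, and log status. It does not handle custom flow log formats, which can add, drop, or reorder fields, and the sample record values are illustrative.

```python
from typing import Dict, Optional, Union

# Field order of the default (version 2) flow log record format.
DEFAULT_FIELDS = (
    "version", "account_id", "interface_id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log_status",
)
INT_FIELDS = {"version", "srcport", "dstport", "protocol", "packets", "bytes", "start", "end"}

FlowRecord = Dict[str, Optional[Union[int, str]]]


def parse_flow_record(line: str) -> FlowRecord:
    """Parse one default-format flow log record into a dict.

    A "-" value means the field is not available (for example in NODATA or
    SKIPDATA records) and is returned as None.
    """
    values = line.split()
    if len(values) != len(DEFAULT_FIELDS):
        raise ValueError(f"expected {len(DEFAULT_FIELDS)} fields, got {len(values)}")
    record: FlowRecord = {}
    for name, raw in zip(DEFAULT_FIELDS, values):
        if raw == "-":
            record[name] = None
        elif name in INT_FIELDS:
            record[name] = int(raw)  # ports, counts, and Unix timestamps
        else:
            record[name] = raw
    return record


if __name__ == "__main__":
    sample = ("2 123456789010 eni-1235b8ca123456789 172.31.16.139 172.31.16.21 "
              "20641 22 6 20 4249 1418530010 1418530070 ACCEPT OK")
    rec = parse_flow_record(sample)
    print(rec["dstport"], rec["protocol"], rec["action"])  # 22 6 ACCEPT
```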

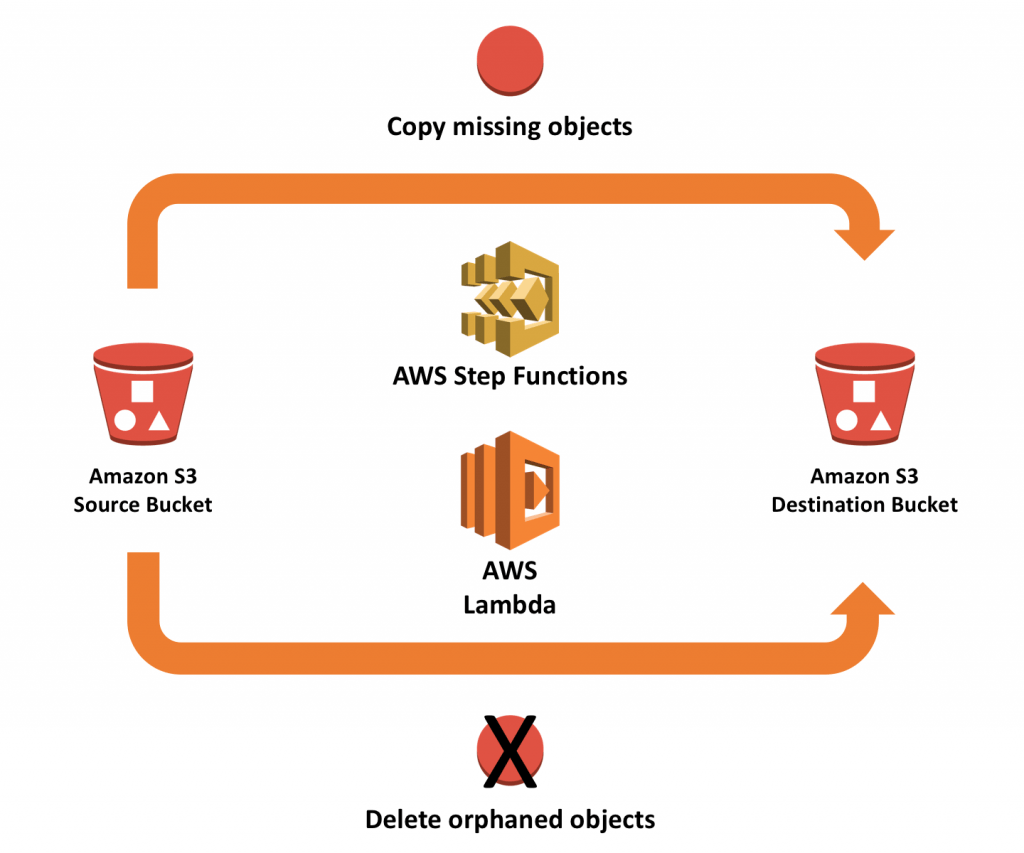

Amazon S3

Amazon S3 has a simple web services interface that you can use to store and retrieve any amount of data, at any time, from anywhere on the web. It gives any developer access to the same highly scalable, reliable, fast, inexpensive data storage infrastructure that Amazon uses to run its own global network of websites.

Amazon EC2

Amazon Elastic Compute Cloud (Amazon EC2) is a web service that provides secure, resizable compute capacity in the cloud. It is designed to make web-scale cloud computing easier for developers. Amazon EC2’s simple web service interface allows you to obtain and configure capacity with minimal friction.

Amazon CloudFront

Amazon CloudFront is a web service that gives businesses and web application developers an easy and cost-effective way to distribute content with low latency and high data transfer speeds. Like other AWS services, Amazon CloudFront is a self-service, pay-per-use offering that requires no long-term commitments or minimum fees. With CloudFront, your files are delivered to end users through a global network of edge locations.

Amazon EBS

Amazon Elastic Block Store (Amazon EBS) is an easy-to-use, high-performance block storage service designed for use with Amazon Elastic Compute Cloud (Amazon EC2) for both throughput-intensive and transaction-intensive workloads at any scale.

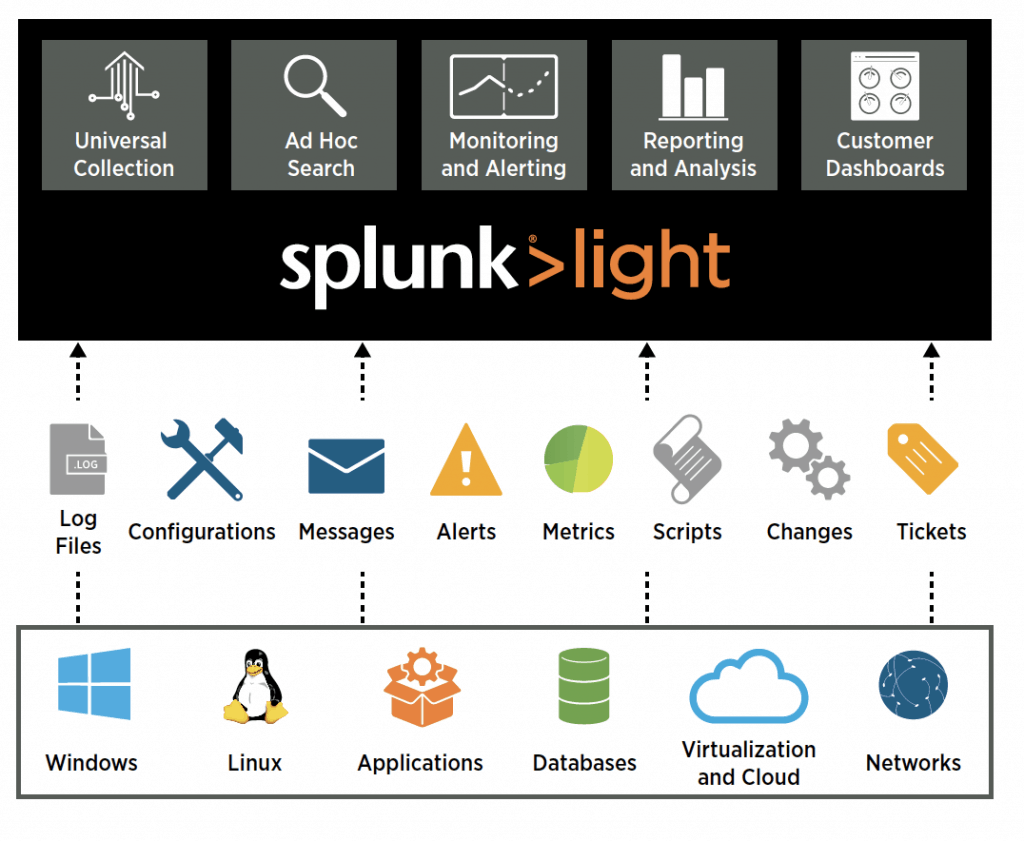

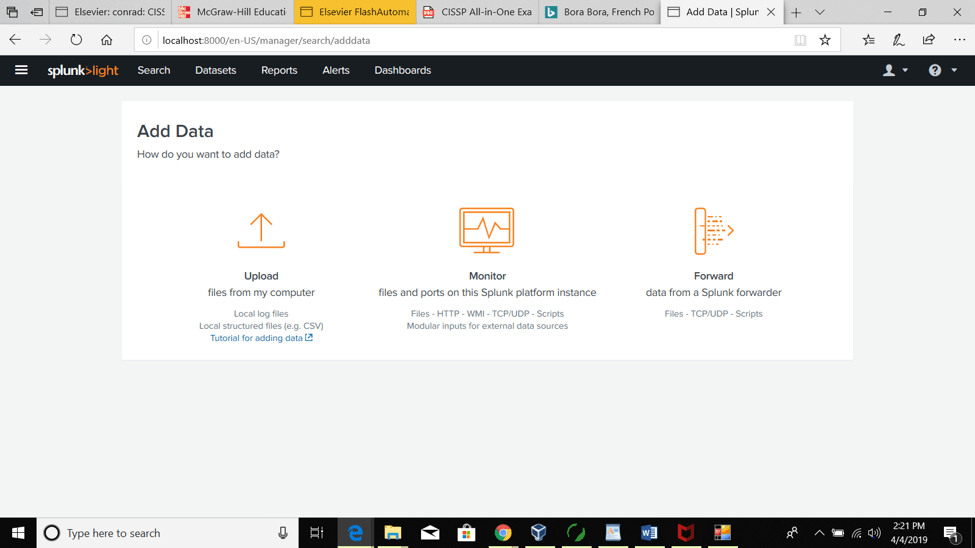

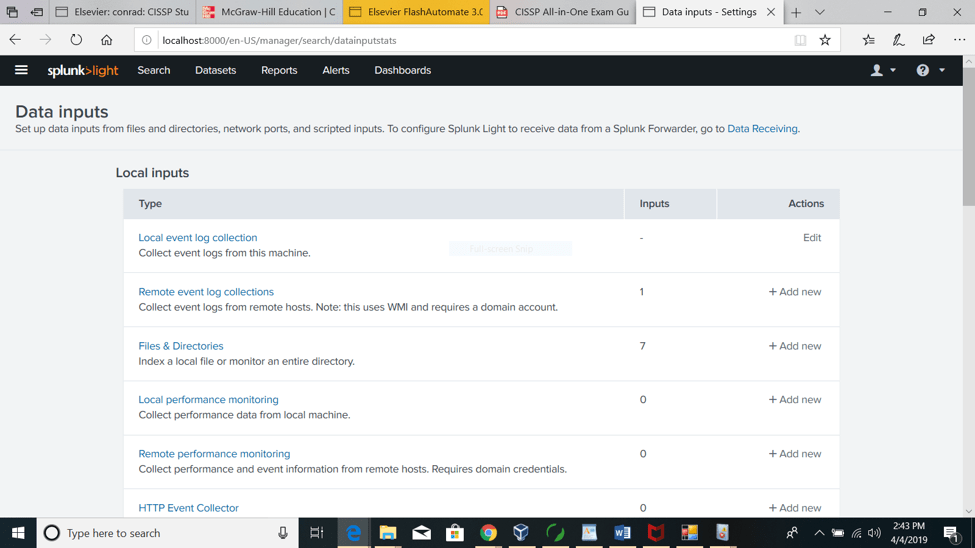

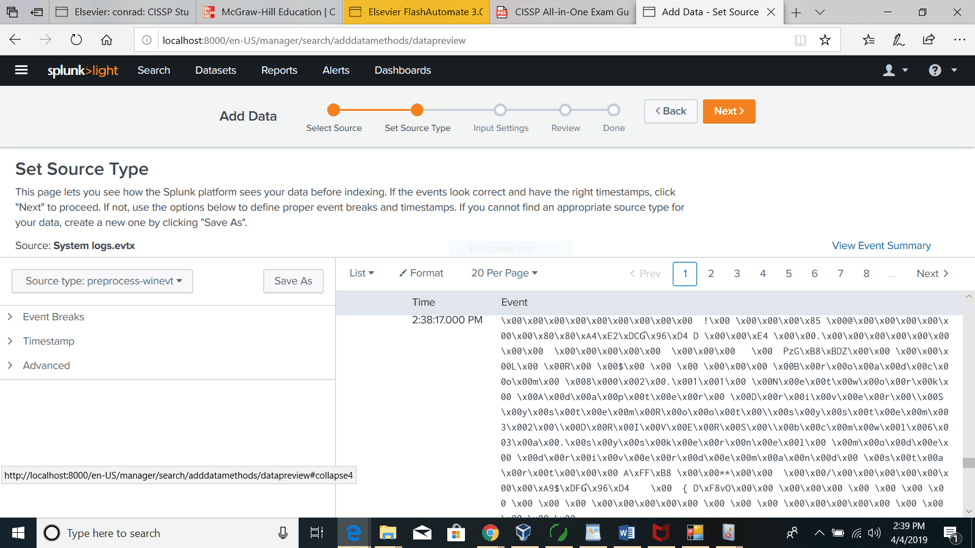

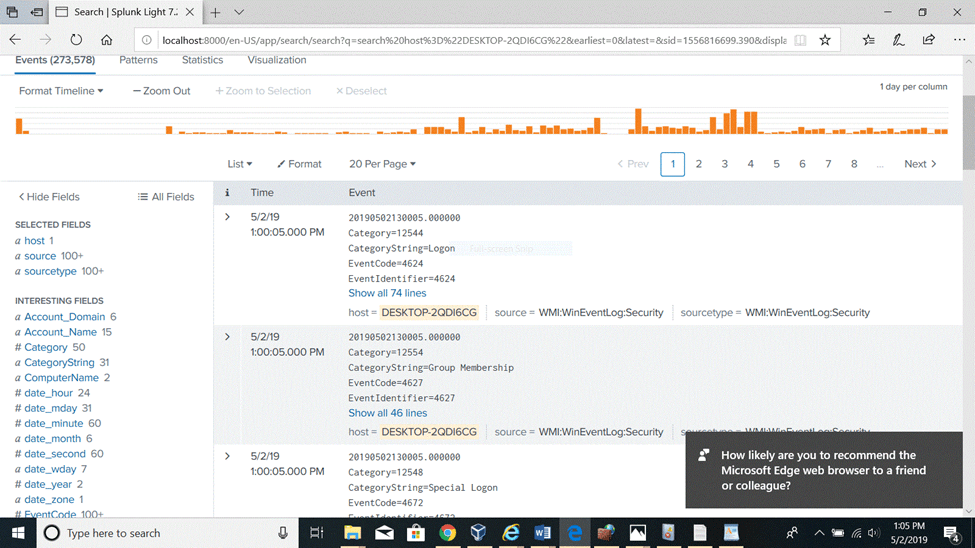

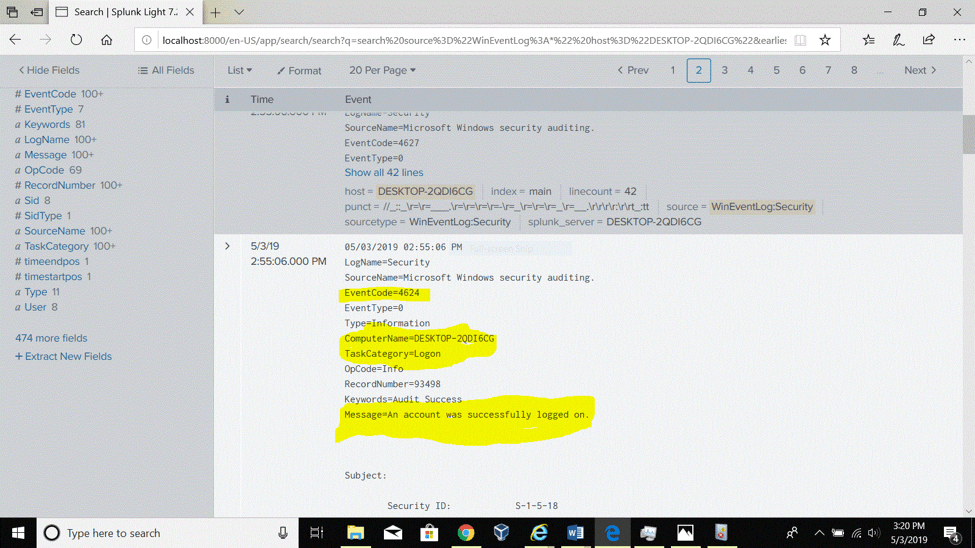

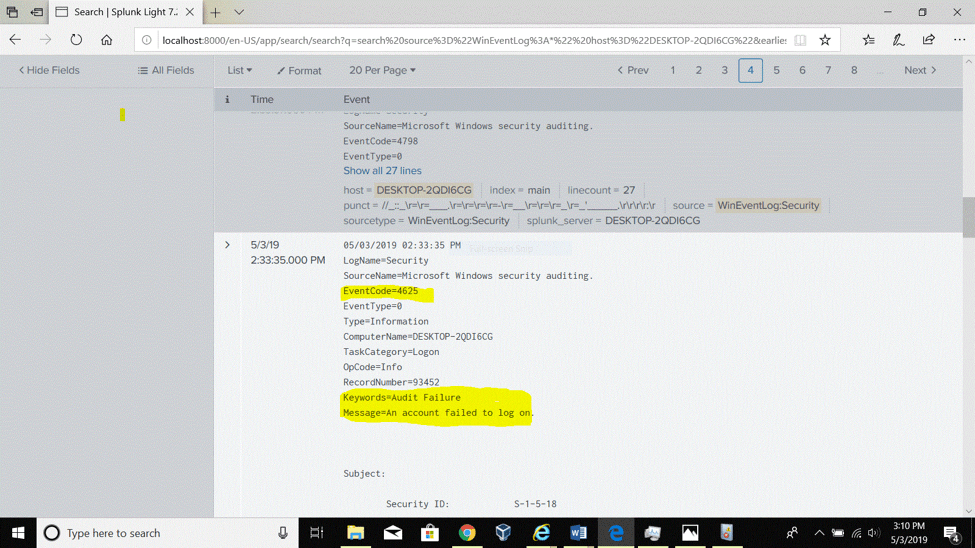

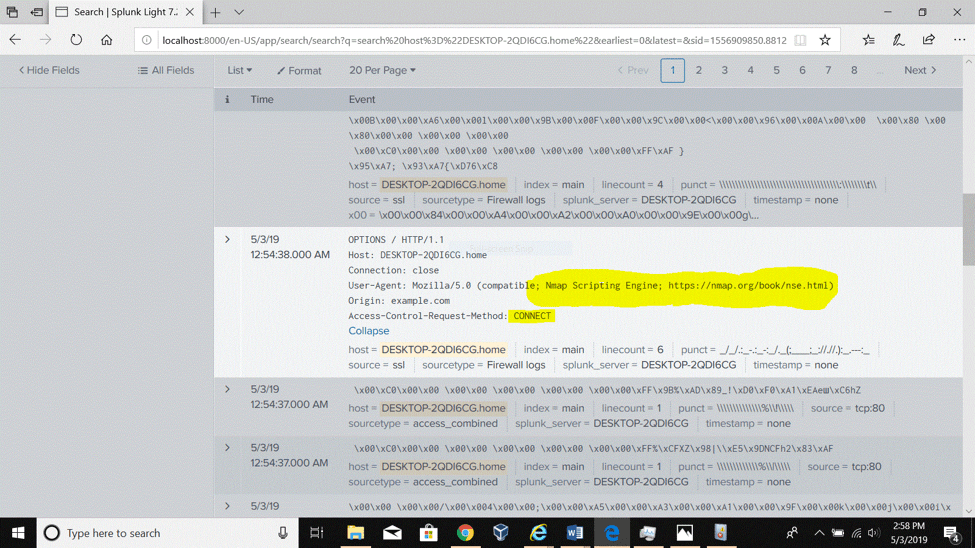

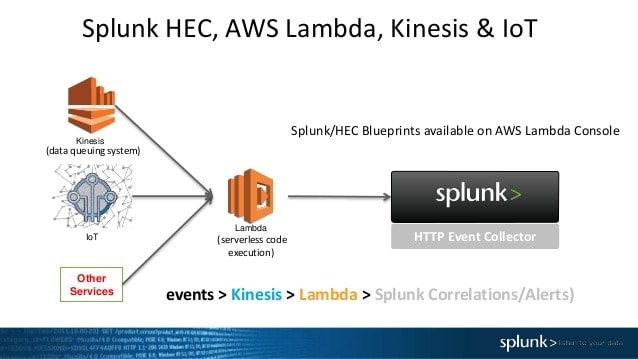

Source: Splunk